Getting started with Talend Component Kit

Talend Component Kit is a Java framework designed to simplify the development of components at two levels:

-

The Runtime, that injects the specific component code into a job or pipeline. The framework helps unifying as much as possible the code required to run in Data Integration (DI) and BEAM environments.

-

The Graphical interface. The framework helps unifying the code required to render the component in a browser or in the Eclipse-based Talend Studio (SWT).

Most part of the development happens as a Maven project and requires a dedicated tool such as IntelliJ.

The Component Kit is made of:

-

A Starter, that is a graphical interface allowing you to define the skeleton of your development project.

-

APIs to implement components UI and runtime.

-

Development tools: Maven wrappers, validation rules, packaging, Web preview, etc.

-

A testing kit based on JUnit 4 and 5.

By using this tooling in a development environment, you can start creating components as described below.

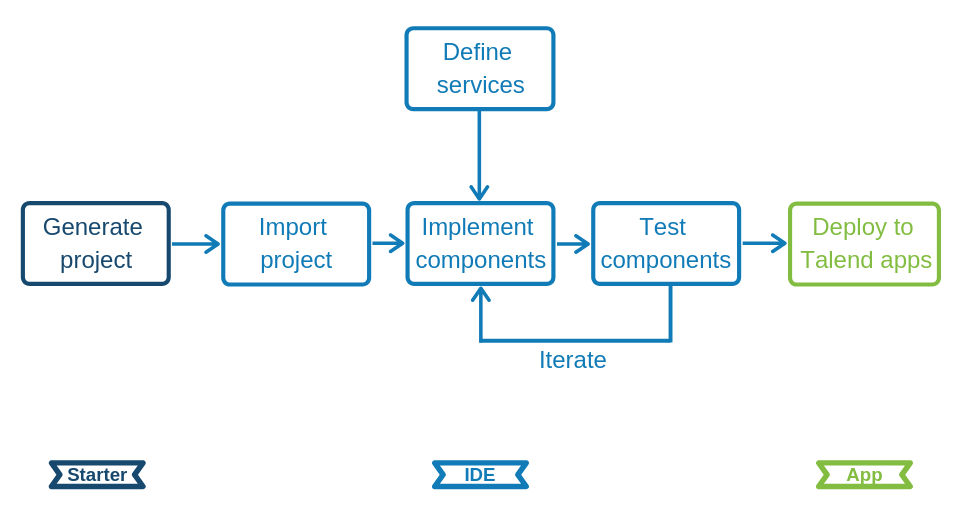

Talend Component Kit methodology

Developing new components using the Component Kit framework includes:

-

Creating a project using the starter or the Talend IntelliJ plugin. This step allows to build the skeleton of the project. It consists in:

-

Defining the general configuration model for each component in your project.

-

Generating and downloading the project archive from the starter.

-

Compiling the project.

-

-

Importing the compiled project in your IDE. This step is not required if you have generated the project using the IntelliJ plugin.

-

Implementing the components, including:

-

Registering the components by specifying their metadata: family, categories, version, icon, type and name.

-

Defining the layout and configurable part of the components.

-

Defining the execution logic of the components, also called runtime.

-

-

Deploying the components to Talend Studio or Cloud applications.

Optionally, you can use services. Services are predefined or user-defined configurations that can be reused in several components.

Component types

There are four types of components, each type coming with its specificities, especially on the runtime side.

-

Input components: Retrieve the data to process from a defined source. An input component is made of:

-

The execution logic of the component, represented by a

Mapperor anEmitterclass. -

The source logic of the component, represented by a

Sourceclass. -

The layout of the component and the configuration that the end-user will need to provide when using the component, defined by a

Configurationclass. All input components must have a dataset specified in their configuration, and every dataset must use a datastore.

-

-

Processors: Process and transform the data. A processor is made of:

-

The execution logic of the component, describing how to process each records or batches of records it receives. It also describes how to pass records to its output connections. This logic is defined in a

Processorclass. -

The layout of the component and the configuration that the end-user will need to provide when using the component, defined by a

Configurationclass.

-

-

Output components: Send the processed data to a defined destination. An output component is made of:

-

The execution logic of the component, describing how to process each records or batches of records it receives. This logic is defined in an

Outputclass. Unlike processors, output components are the last components of the execution and return no data. -

The layout of the component and the configuration that the end-user will need to provide when using the component, defined by a

Configurationclass. All output components must have a dataset specified in their configuration, and every dataset must use a datastore.

-

-

Standalone components: Make a call to the service or run a query on the database. A standalone component is made of:

-

The execution logic of the component, represented by a

DriverRunnerclass. -

The layout of the component and the configuration that the end-user will need to provide when using the component, defined by a

Configurationclass. All input components must have a datastore or dataset specified in their configuration, and every dataset must use a datastore.

-

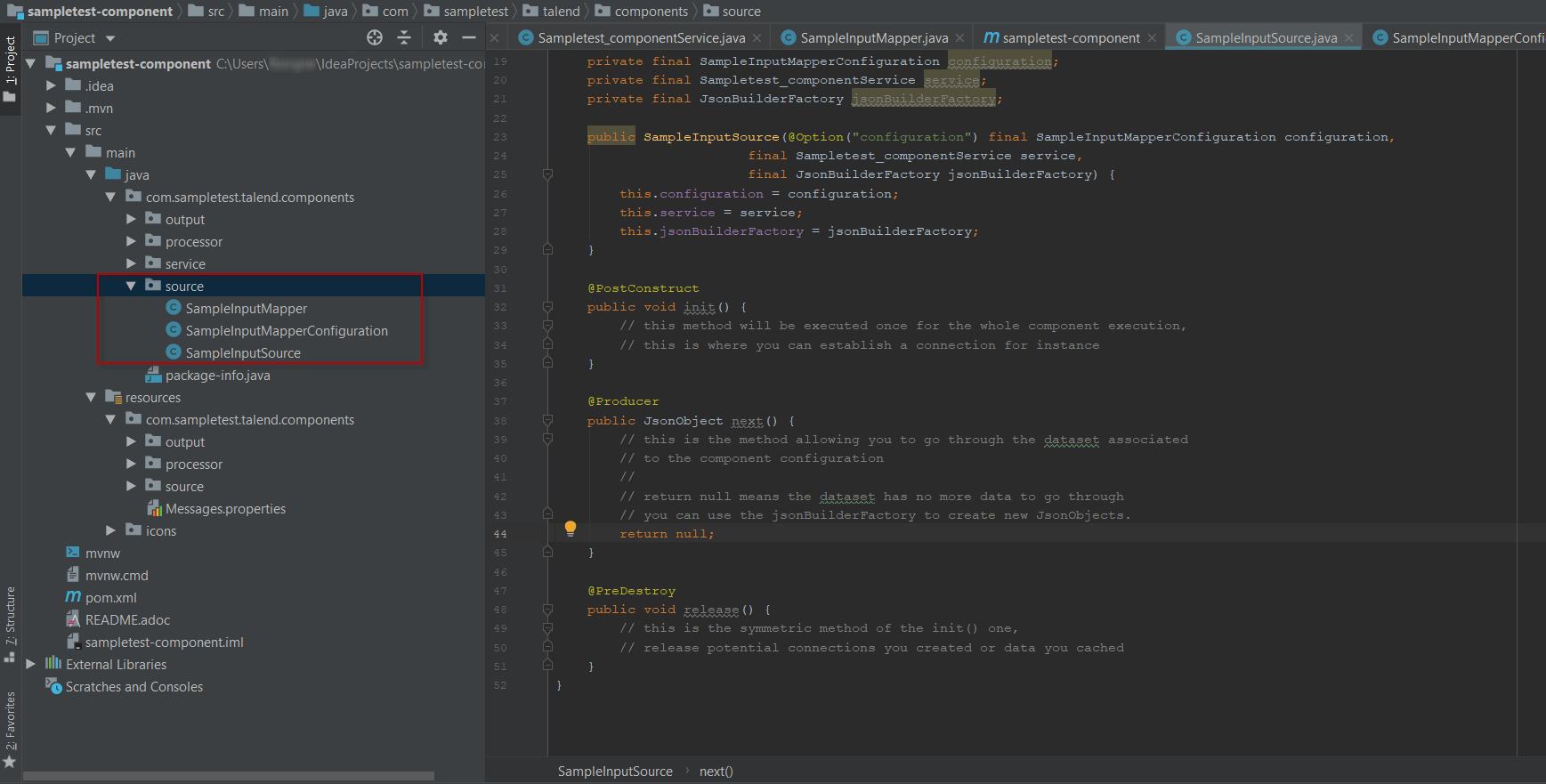

The following example shows the different classes of an input components in a multi-component development project:

Creating your first component

This tutorial walks you through the most common iteration steps to create a component with Talend Component Kit and to deploy it to Talend Open Studio.

The component created in this tutorial is a simple processor that reads data coming from the previous component in a job or pipeline and displays it in the console logs of the application, along with an additional information entered by the final user.

| The component designed in this tutorial is a processor and does not require nor show any datastore and dataset configuration. Datasets and datastores are required only for input and output components. |

Prerequisites

To get your development environment ready and be able to follow this tutorial:

-

Download and install a Java JDK 1.8 or greater.

-

Download and install Talend Open Studio. For example, from Sourceforge.

-

Download and install IntelliJ.

-

Download the Talend Component Kit plugin for IntelliJ. The detailed installation steps for the plugin are available in this document.

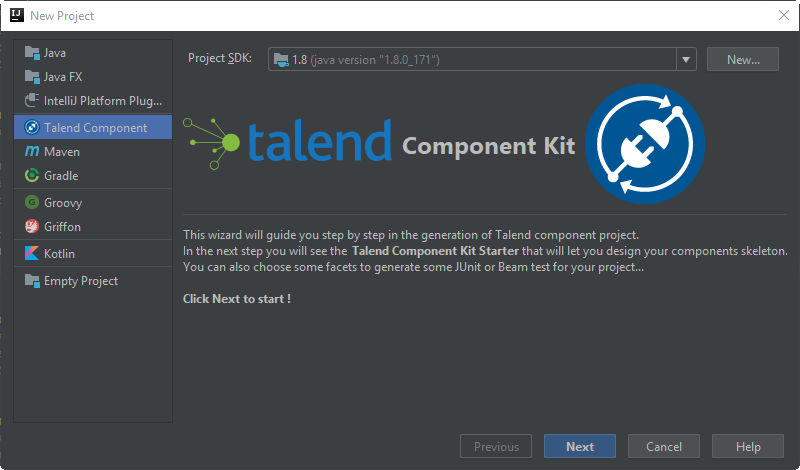

Generate a component project

The first step in this tutorial is to generate a component skeleton using the Starter embedded in the Talend Component Kit plugin for IntelliJ.

-

Start IntelliJ and create a new project. In the available options, you should see Talend Component.

-

Make sure that a Project SDK is selected. Then, select Talend Component and click Next.

The Talend Component Kit Starter opens. -

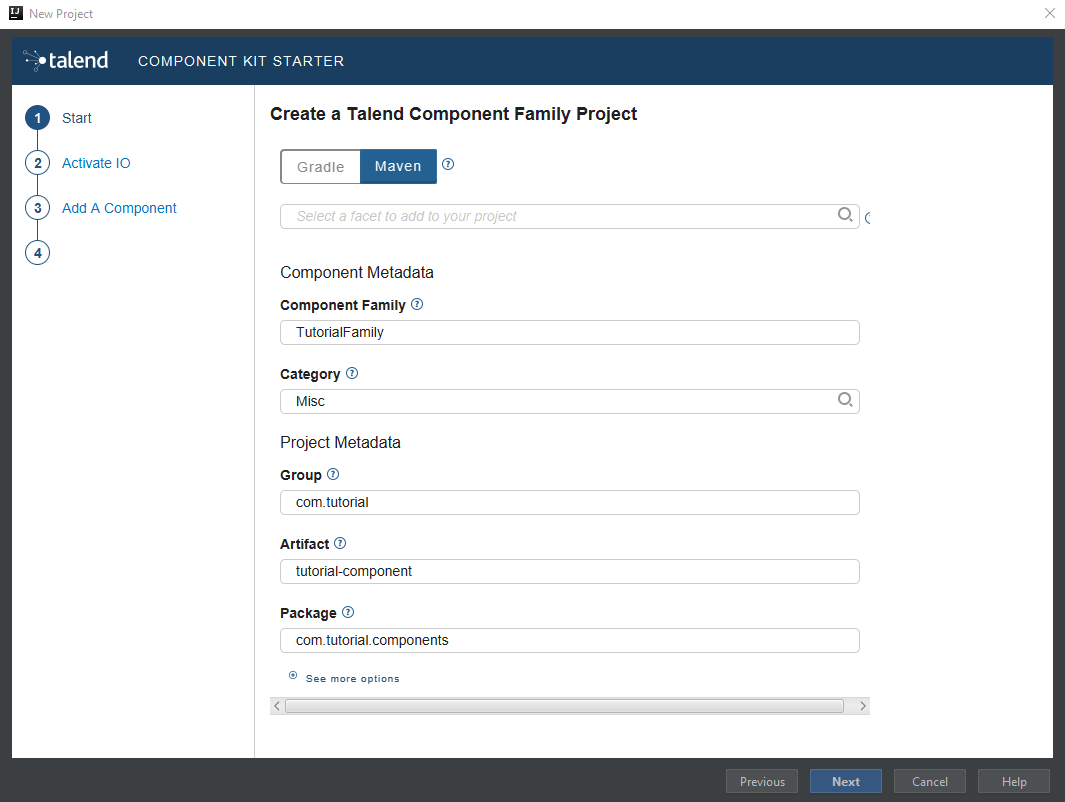

Enter the component and project metadata. Change the default values, for example as presented in the screenshot below:

-

The Component Family and the Category will be used later in Talend Open Studio to find the new component.

-

Project metadata is mostly used to identify the project structure. A common practice is to replace 'company' in the default value by a value of your own, like your domain name.

-

-

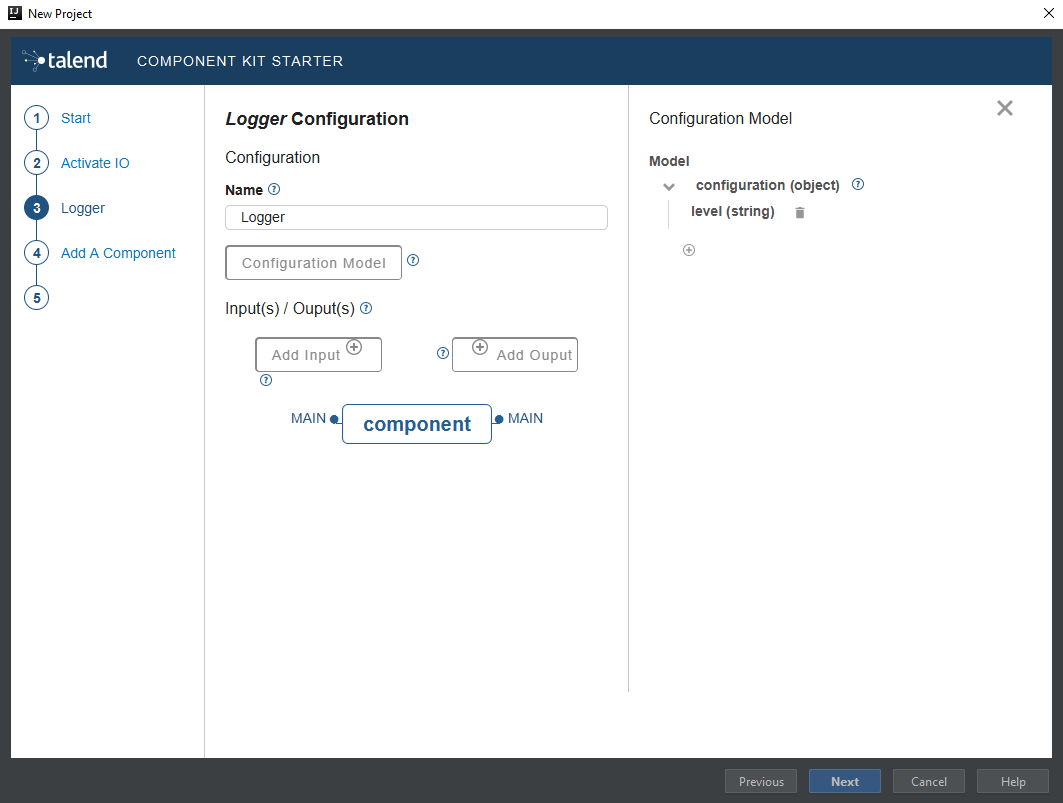

Once the metadata is filled, select Add a component. A new screen is displayed in the Talend Component Kit Starter that lets you define the generic configuration of the component. By default, new components are processors.

-

Enter a valid Java name for the component. For example, Logger.

-

Select Configuration Model and add a string type field named

level. This input field will be used in the component configuration for final users to enter additional information to display in the logs. -

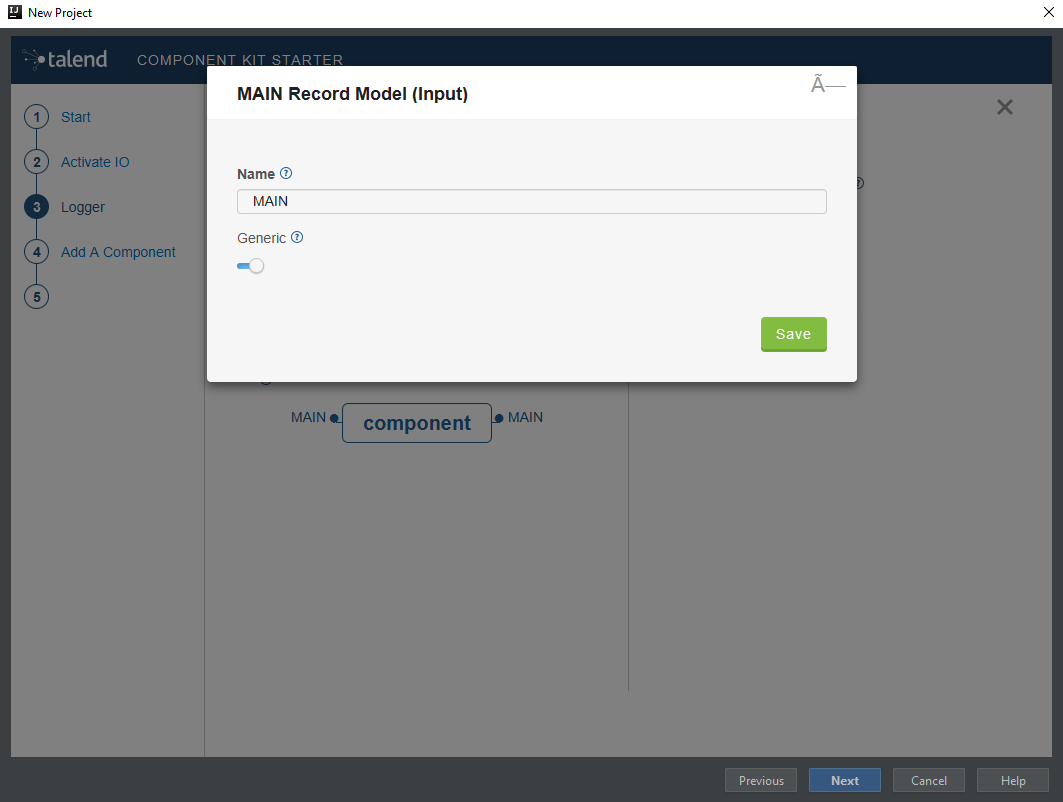

In the Input(s) / Output(s) section, click the default MAIN input branch to access its detail, and make sure that the record model is set to Generic. Leave the Name of the branch with its default

MAINvalue. -

Repeat the same step for the default MAIN output branch.

Because the component is a processor, it has an output branch by default. A processor without any output branch is considered an output component. You can create output components when the Activate IO option is selected. -

Click Next and check the name and location of the project, then click Finish to generate the project in the IDE.

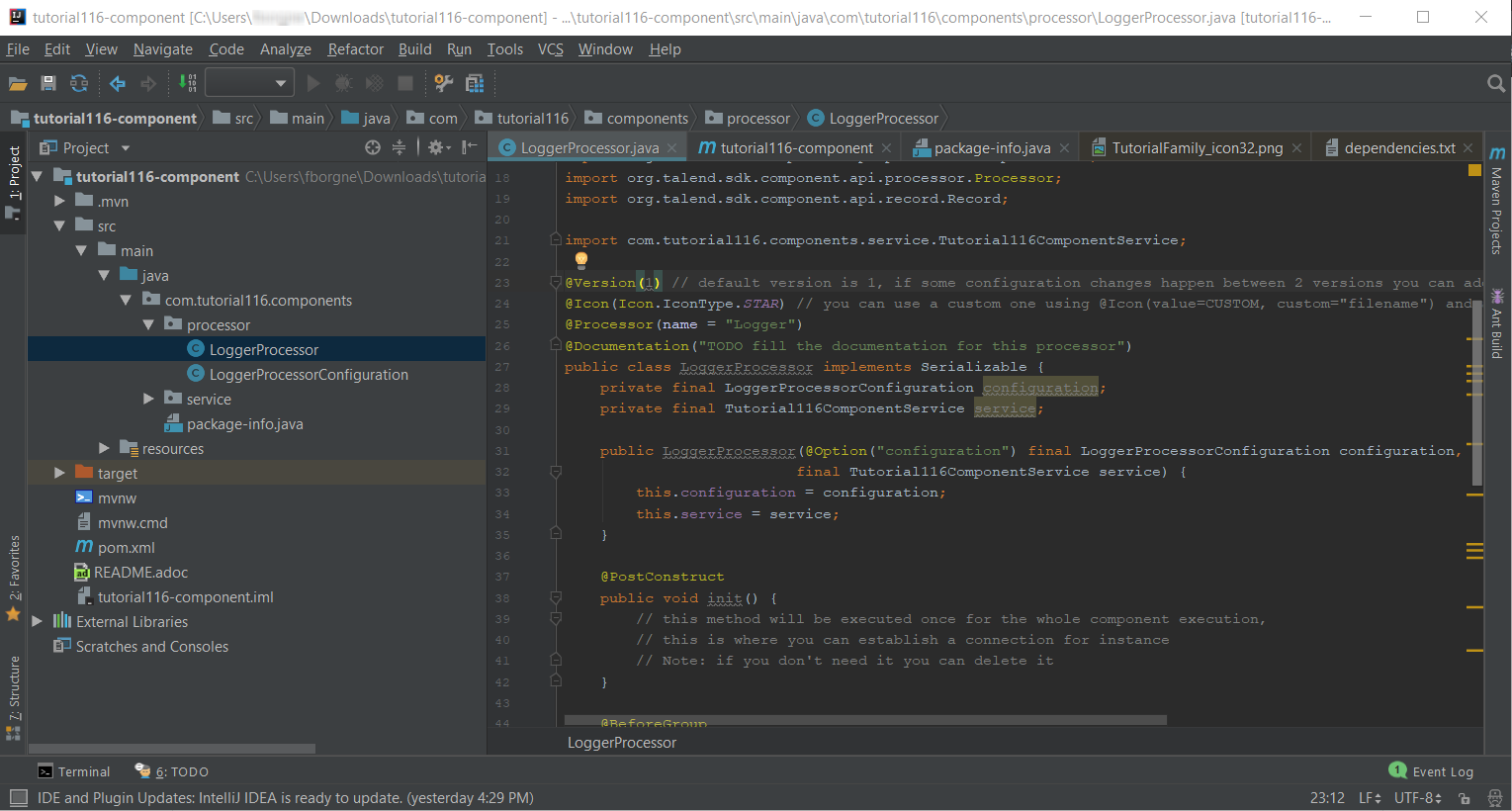

At this point, your component is technically already ready to be compiled and deployed to Talend Open Studio. But first, take a look at the generated project:

-

Two classes based on the name and type of component defined in the Talend Component Kit Starter have been generated:

-

LoggerProcessor is where the component logic is defined

-

LoggerProcessorConfiguration is where the component layout and configurable fields are defined, including the level string field that was defined earlier in the configuration model of the component.

-

-

The package-info.java file contains the component metadata defined in the Talend Component Kit Starter, such as family and category.

-

You can notice as well that the elements in the tree structure are named after the project metadata defined in the Talend Component Kit Starter.

These files are the starting point if you later need to edit the configuration, logic, and metadata of the component.

There is more that you can do and configure with the Talend Component Kit Starter. This tutorial covers only the basics. You can find more information in this document.

Compile and deploy the component to Talend Open Studio

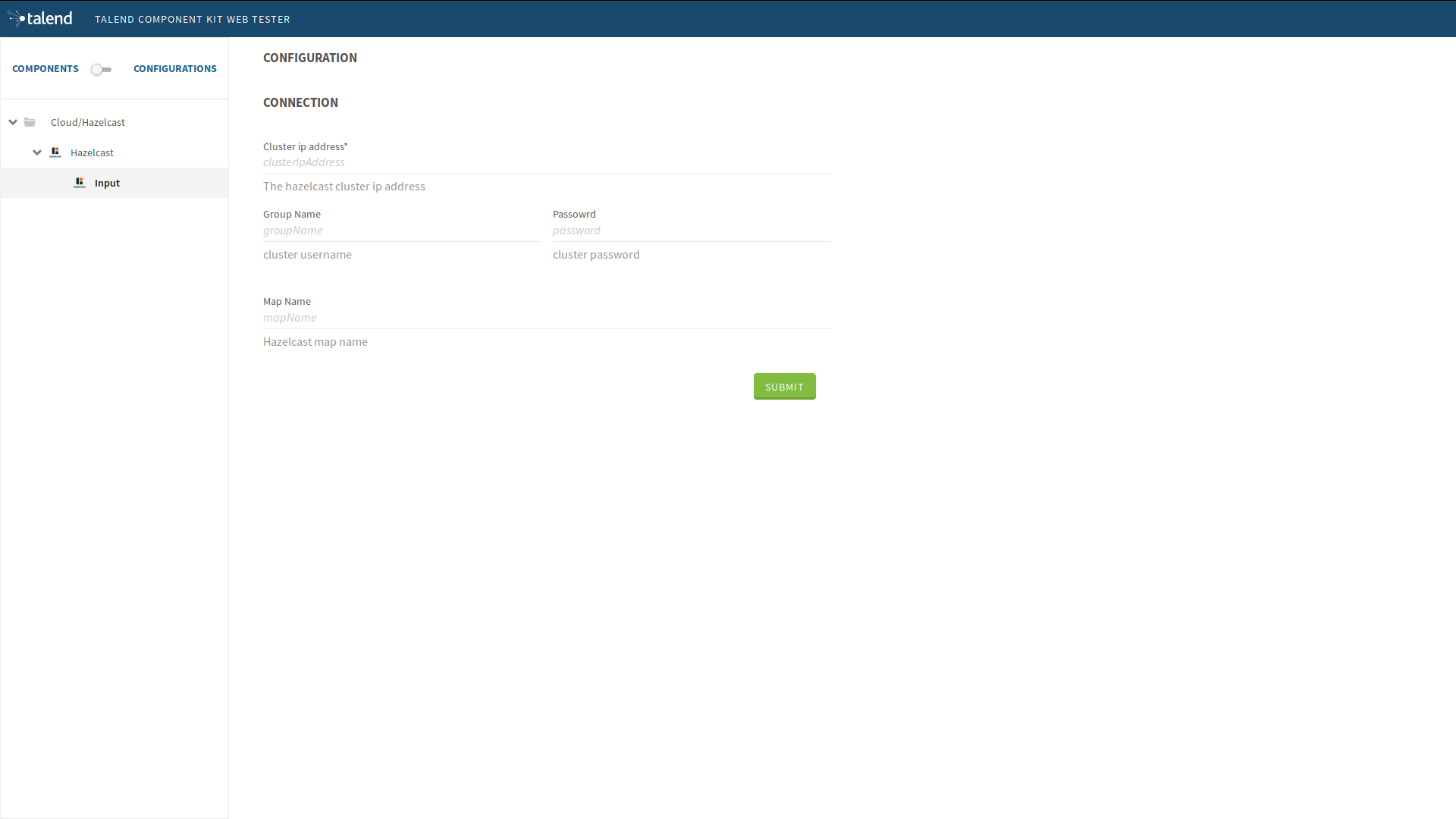

Without modifying the component code generated from the Starter, you can compile the project and deploy the component to a local instance of Talend Open Studio.

The logic of the component is not yet implemented at that stage. Only the configurable part specified in the Starter will be visible. This step is useful to confirm that the basic configuration of the component renders correctly.

Before starting to run any command, make sure that Talend Open Studio is not running.

-

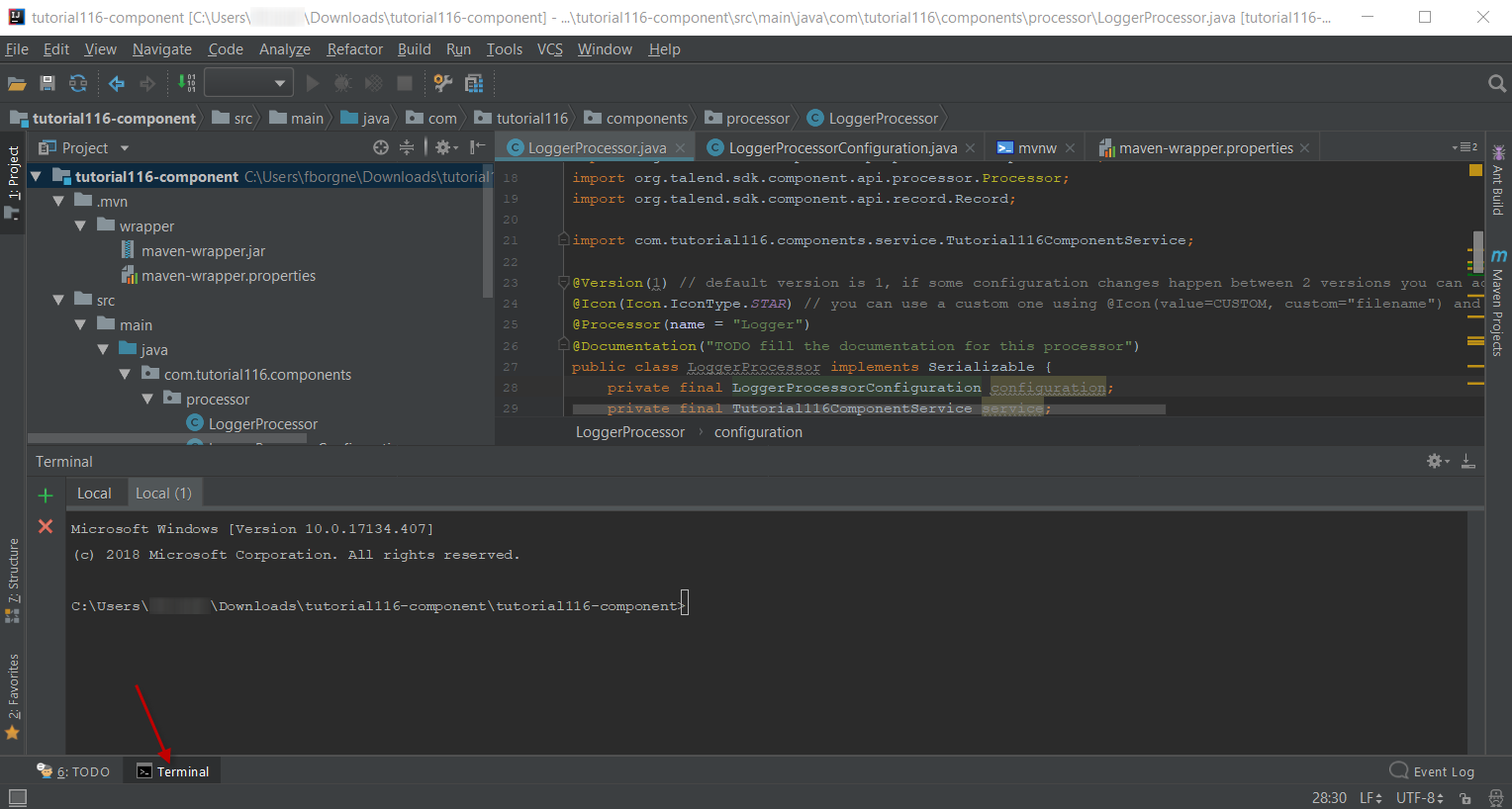

From the component project in IntelliJ, open a Terminal and make sure that the selected directory is the root of the project. All commands shown in this tutorial are performed from this location.

-

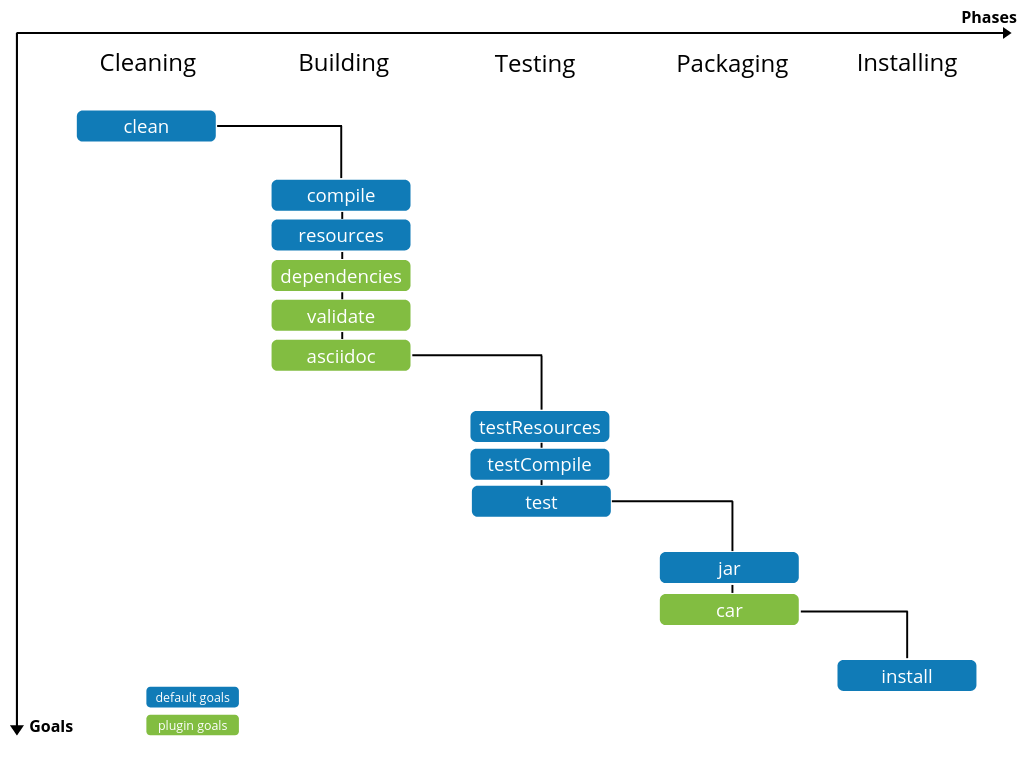

Compile the project by running the following command:

mvnw clean install.

Themvnwcommand refers to the Maven wrapper that is embedded in Talend Component Kit. It allows to use the right version of Maven for your project without having to install it manually beforehand. -

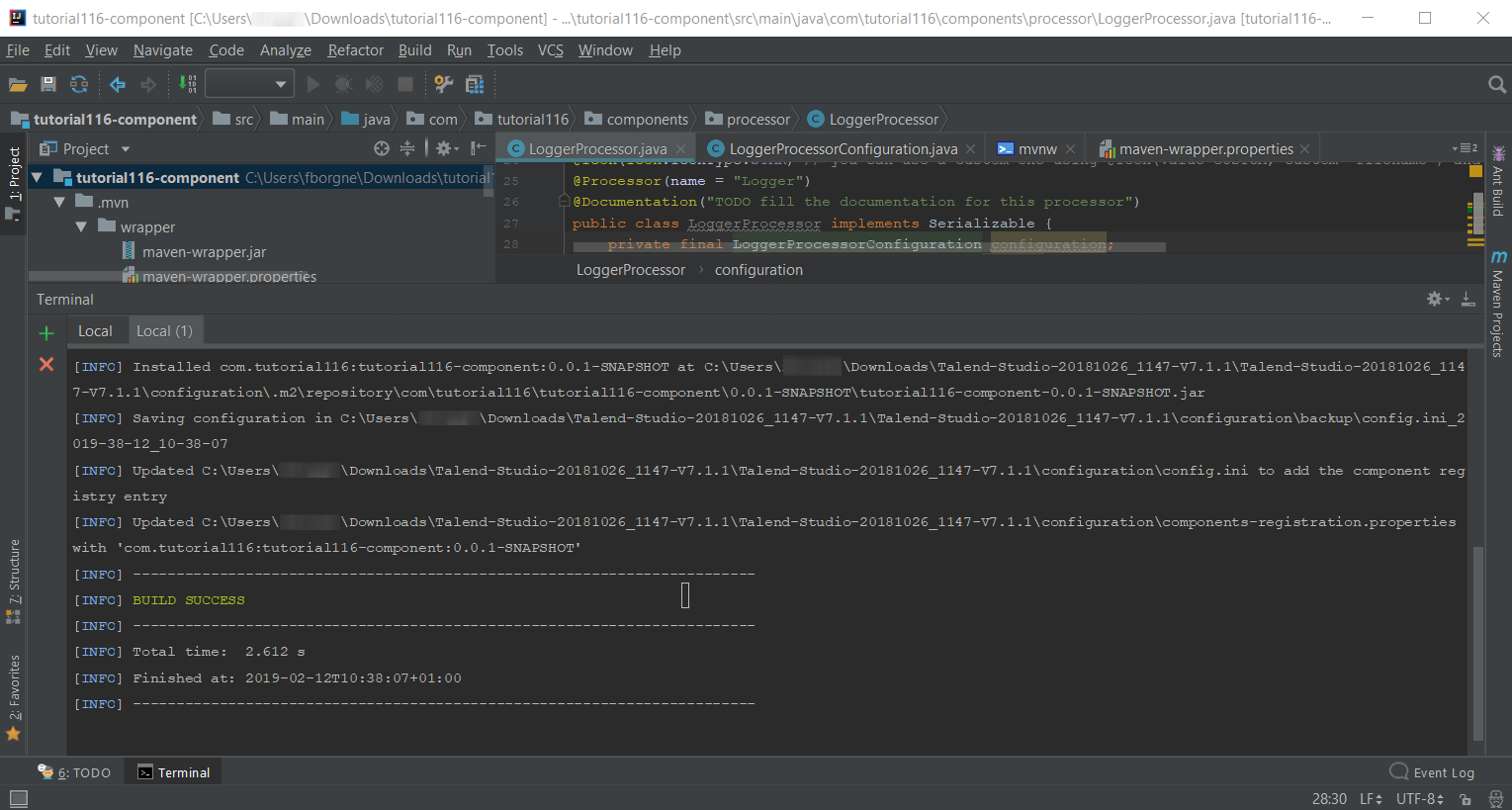

Once the command is executed and you see BUILD SUCCESS in the terminal, deploy the component to your local instance of Talend Open Studio using the following command:

mvnw talend-component:deploy-in-studio -Dtalend.component.studioHome="<path to Talend Open Studio home>".Replace the path with your own value. If the path contains spaces (for example, Program Files), enclose it with double quotes. -

Make sure the build is successful.

-

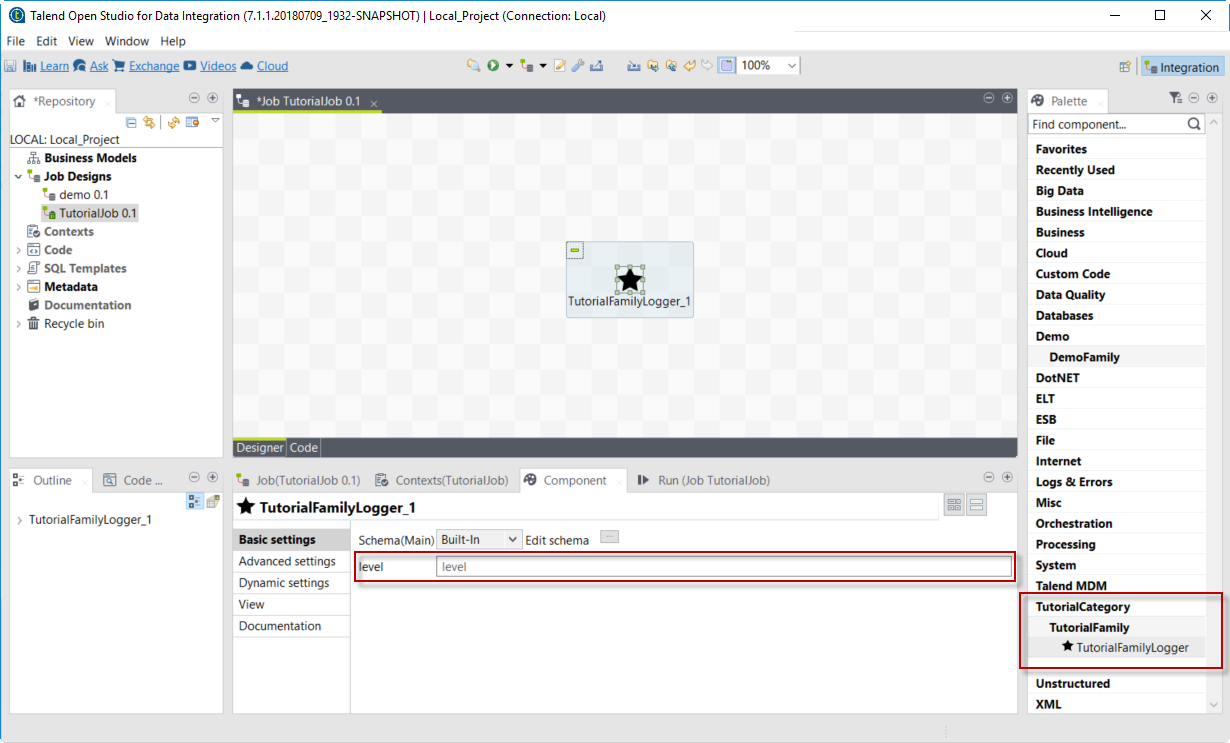

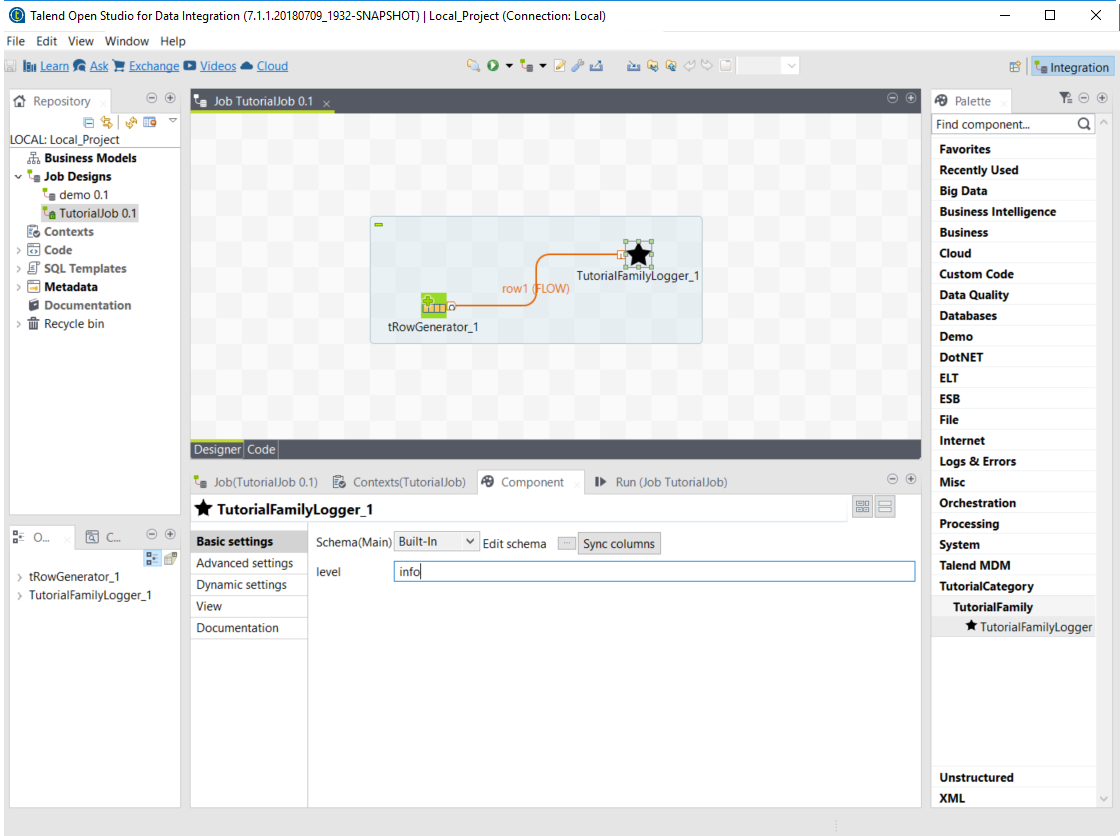

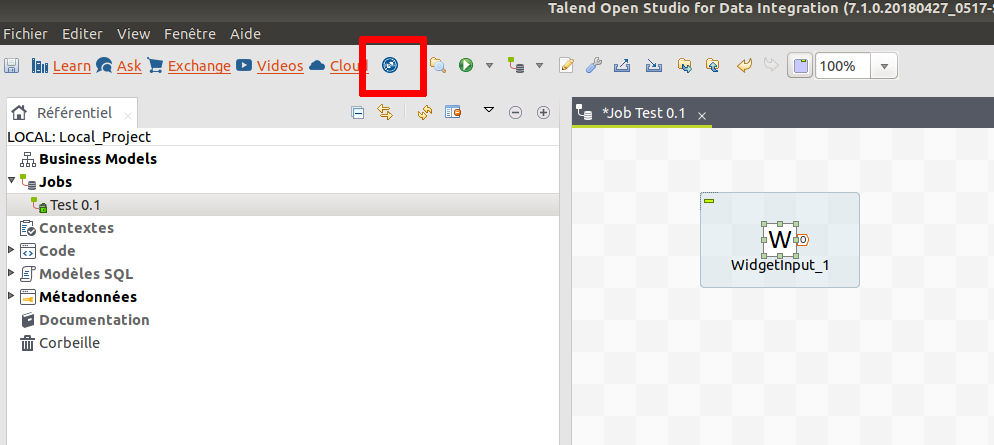

Open Talend Open Studio and create a new Job:

At this point, the new component is available in Talend Open Studio, and its configurable part is already set. But the component logic is still to be defined.

Edit the component

You can now edit the component to implement its logic: reading the data coming through the input branch to display that data in the execution logs of the job. The value of the level field that final users can fill also needs to be changed to uppercase and displayed in the logs.

-

Save the job created earlier and close Talend Open Studio.

-

Go back to the component development project in IntelliJ and open the LoggerProcessor class. This is the class where the component logic can be defined.

-

Look for the

@ElementListenermethod. It is already present and references the default input branch that was defined in the Talend Component Kit Starter, but it is not complete yet. -

To be able to log the data in input to the console, add the following lines:

//Log read input to the console with uppercase level. System.out.println("["+configuration.getLevel().toUpperCase()+"] "+defaultInput);The

@ElementListenermethod now looks as follows:@ElementListener public void onNext( @Input final Record defaultInput) { //Reads the input. //Log read input to the console with uppercase level. System.out.println("["+configuration.getLevel().toUpperCase()+"] "+defaultInput); }

-

Open a Terminal again to compile the project and deploy the component again. To do that, run successively the two following commands:

-

mvnw clean install -

`mvnw talend-component:deploy-in-studio -Dtalend.component.studioHome="<path to Talend Open Studio home>"

-

The update of the component logic should now be deployed. After restarting Talend Open Studio, you will be ready to build a job and use the component for the first time.

To learn the different possibilities and methods available to develop more complex logics, refer to this document.

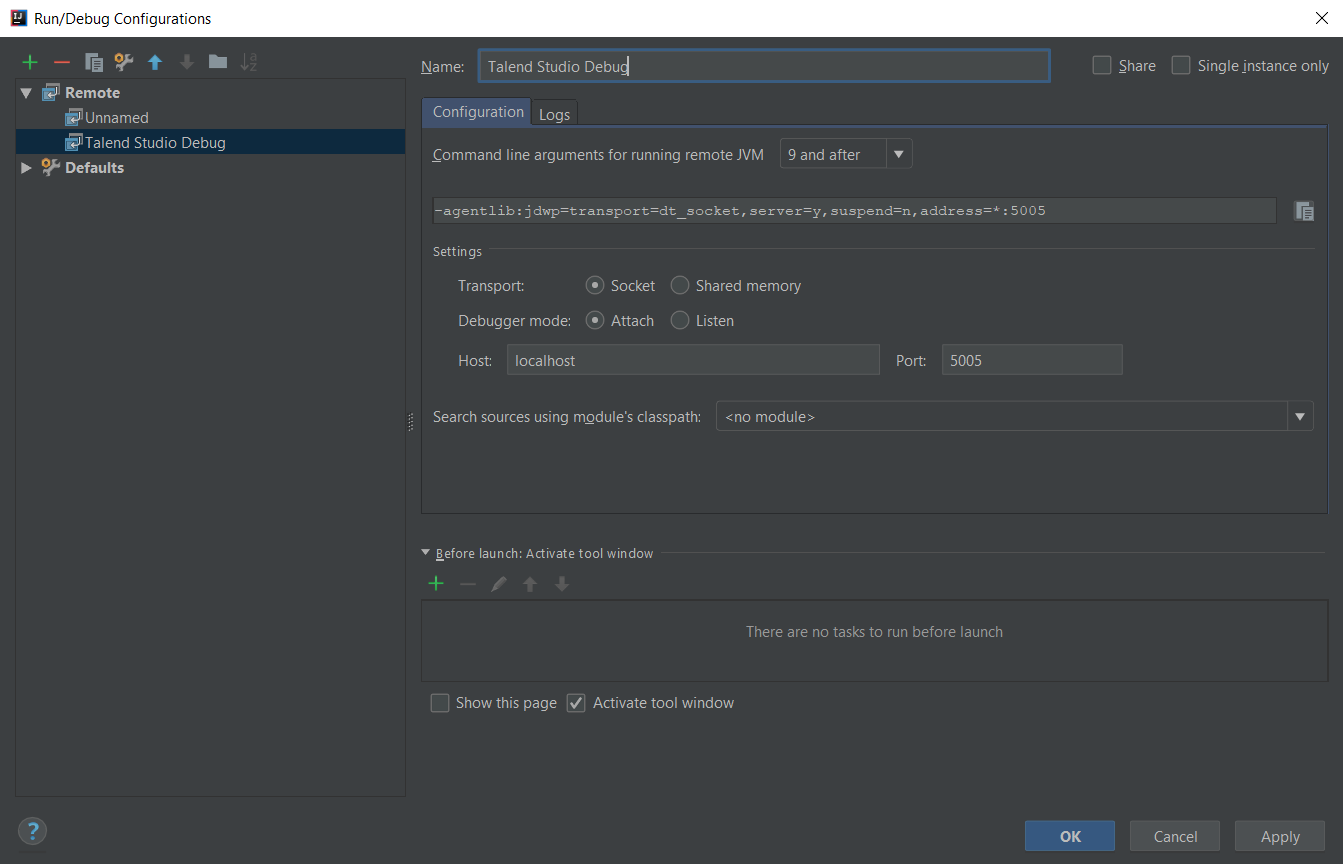

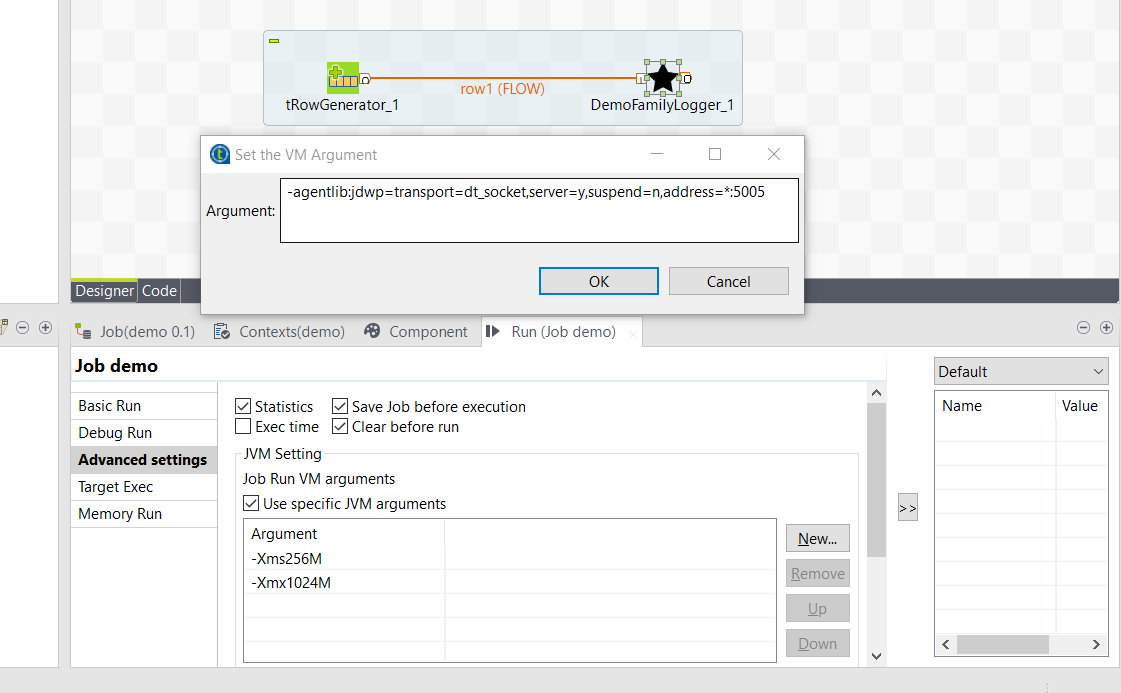

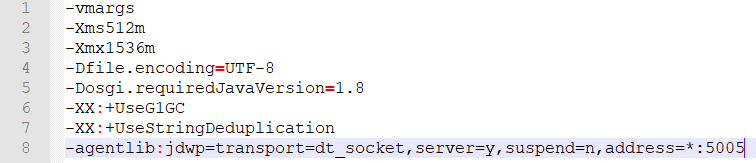

If you want to avoid having to close and re-open Talend Open Studio every time you need to make an edit, you can enable the developer mode, as explained in this document.

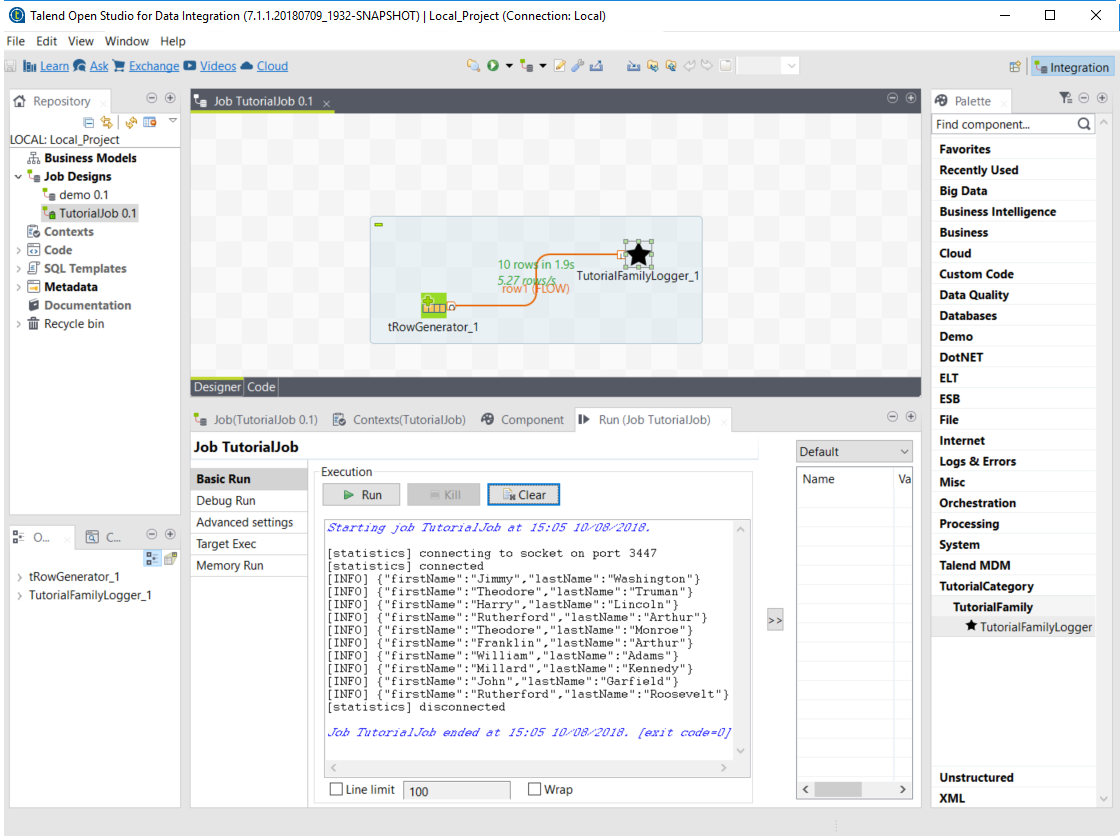

Build a job with the component

As the component is now ready to be used, it is time to create a job and check that it behaves as intended.

-

Open Talend Open Studio again and go to the job created earlier. The new component is still there.

-

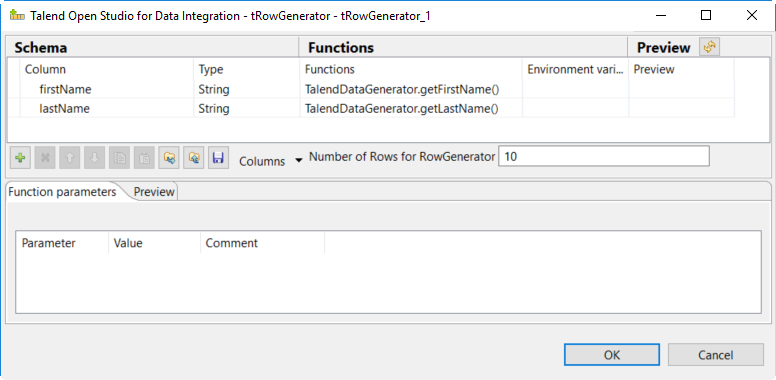

Add a tRowGenerator component and connect it to the logger.

-

Double-click the tRowGenerator to specify the data to generate:

-

Validate the tRowGenerator configuration.

-

Open the TutorialFamilyLogger component and set the level field to

info. -

Go to the Run tab of the job and run the job.

The job is executed. You can observe in the console that each of the 10 generated rows is logged, and that theinfovalue entered in the logger is also displayed with each record, in uppercase.

Record types

Components are designed to manipulate data (access, read, create). Talend Component Kit can handle several types of data, described in this document.

By design, the framework must run in DI (plain standalone Java program) and in Beam pipelines.

It is out of scope of the framework to handle the way the runtime serializes - if needed - the data.

For that reason, it is critical not to import serialization constraints to the stack. As an example, this is one of the reasons why Record or JsonObject were preferred to Avro IndexedRecord.

Any serialization concern should either be hidden in the framework runtime (outside of the component developer scope) or in the runtime integration with the framework (for example, Beam integration).

Record

Record is the default format. It offers many possibilities and can evolve depending on the Talend platform needs. Its structure is data-driven and exposes a schema that allows to browse it.

Projects generated from the Talend Component Kit Starter are by default designed to handle this format of data.

Record is a Java interface but never implement it yourself to ensure compatibility with the different Talend products. Follow the guidelines below.

|

Creating a record

You can build records using the newRecordBuilder method of the RecordBuilderFactory (see here).

For example:

public Record createRecord() {

return factory.newRecordBuilder()

.withString("name", "Gary")

.withDateTime("date", ZonedDateTime.of(LocalDateTime.of(2011, 2, 6, 8, 0), ZoneId.of("UTC")))

.build();

}In the example above, the schema is dynamically computed from the data. You can also do it using a pre-built schema, as follows:

public Record createRecord() {

return factory.newRecordBuilder(myAlreadyBuiltSchemaWithSchemaBuilder)

.withString("name", "Gary")

.withDateTime("date", ZonedDateTime.of(LocalDateTime.of(2011, 2, 6, 8, 0), ZoneId.of("UTC")))

.build();

}The example above uses a schema that was pre-built using factory.newSchemaBuilder(Schema.Type.RECORD).

When using a pre-built schema, the entries passed to the record builder are validated. It means that if you pass a null value null or an entry type that does not match the provided schema, the record creation fails. It also fails if you try to add an entry which does not exist or if you did not set a not nullable entry.

| Using a dynamic schema can be useful on the backend but can lead users to more issues when creating a pipeline to process the data. Using a pre-built schema is more reliable for end-users. |

Accessing and reading a record

You can access and read data by relying on the getSchema method, which provides you with the available entries (columns) of a record. The Entry exposes the type of its value, which lets you access the value through the corresponding method. For example, the Schema.Type.STRING type implies using the getString method of the record.

For example:

public void print(final Record record) {

final Schema schema = record.getSchema();

// log in the natural type

schema.getEntries()

.forEach(entry -> System.out.println(record.get(Object.class, entry.getName())));

// log only strings

schema.getEntries().stream()

.filter(e -> e.getType() == Schema.Type.STRING)

.forEach(entry -> System.out.println(record.getString(entry.getName())));

}Supported data types

The Record format supports the following data types:

-

String

-

Boolean

-

Int

-

Long

-

Float

-

Double

-

DateTime

-

Array

-

Bytes

-

Record

| A map can always be modelized as a list (array of records with key and value entries). |

For example:

public Record create() {

final Record address = factory.newRecordBuilder()

.withString("street", "Prairie aux Ducs")

.withString("city", "Nantes")

.withString("country", "FRANCE")

.build();

return factory.newRecordBuilder()

.withBoolean("active", true)

.withInt("age", 33)

.withLong("duration", 123459)

.withFloat("tolerance", 1.1f)

.withDouble("balance", 12.58)

.withString("name", "John Doe")

.withDateTime("birth", ZonedDateTime.now())

.withRecord(

factory.newEntryBuilder()

.withName("address")

.withType(Schema.Type.RECORD)

.withComment("The user address")

.withElementSchema(address.getSchema())

.build(),

address)

.withArray(

factory.newEntryBuilder()

.withName("permissions")

.withType(Schema.Type.ARRAY)

.withElementSchema(factory.newSchemaBuilder(Schema.Type.STRING).build())

.build(),

asList("admin", "dev"))

.build();

}Example: discovering a schema

For example, you can use the API to provide the schema. The following method needs to be implemented in a service.

Manually constructing the schema without any data:

@DiscoverSchema

getSchema(@Option MyDataset dataset) {

return factory.newSchemaBuilder(Schema.Type.RECORD)

.withEntry(factory.newEntryBuilder().withName("id").withType(Schema.Type.LONG).build())

.withEntry(factory.newEntryBuilder().withName("name").withType(Schema.Type.STRING).build())

.build();

}Returning the schema from an already built record:

@DiscoverSchema

public Schema guessSchema(@Option MyDataset dataset, final MyDataLoaderService myCustomService) {

return myCustomService.loadFirstData().getRecord().getSchema();

}

MyDataset is the class that defines the dataset. Learn more about datasets and datastores in this document.

|

Authorized characters in entry names

Entry names for Record and JsonObject types must comply with the following rules:

-

The name must start with a letter or with

_. If not, the invalid characters are ignored until the first valid character. -

Following characters of the name must be a letter, a number, or

. If not, the invalid character is replaced with.

For example:

-

1foobecomesfoo. -

f@obecomesf_o. -

1234f5@obecomes___f5_o. -

foo123staysfoo123.

JsonObject

The runtime also supports JsonObject as input and output component type. You can rely on the JSON services (Jsonb, JsonBuilderFactory) to create new instances.

This format is close to the Record format, except that it does not natively support the Datetime type and has a unique Number type to represent Int, Long, Float and Double types. It also does not provide entry metadata like nullable or comment, for example.

It also inherits the Record format limitations.

Setting up your environment

Before being able to develop components using Talend Component Kit, you need the right system configuration and tools.

Although Talend Component Kit comes with some embedded tools, such as Maven wrappers, you still need to prepare your system. A Talend Component Kit plugin for IntelliJ is also available and allows to design and generate your component project right from IntelliJ.

System prerequisites

In order to use Talend Component Kit, you need the following tools installed on your machine:

-

Java JDK 1.8.x. You can download it from Oracle website.

-

Talend Open Studio to integrate your components.

-

A Java Integrated Development Environment such as Eclipse or IntelliJ. IntelliJ is recommended as a Talend Component Kit plugin is available.

-

Optional: If you use IntelliJ, you can install the Talend Component Kit plugin for IntelliJ.

-

Optional: A build tool:

-

Apache Maven 3.5.4 is recommended to develop a component or the project itself. You can download it from Apache Maven website.

It is optional to install a build tool independently since Maven wrappers are already available with Talend Component Kit.

-

Installing the Talend Component Kit IntelliJ plugin

The Talend Component Kit IntelliJ plugin is a plugin for the IntelliJ Java IDE. It adds support for the Talend Component Kit project creation.

Main features:

-

Project generation support.

-

Internationalization completion for component configuration.

Installing the IntelliJ plugin

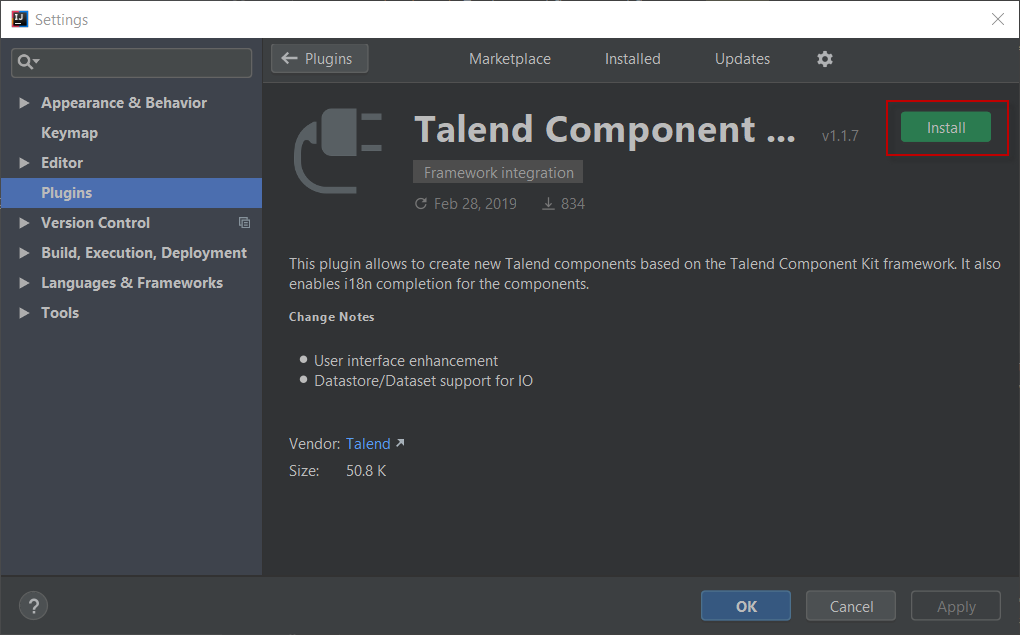

In the Intellij IDEA:

-

Go to File > Settings…

-

On the left panel, select Plugins.

-

Access the Marketplace tab.

-

Enter

Talendin the search field and Select Talend Component Kit. -

Select Install.

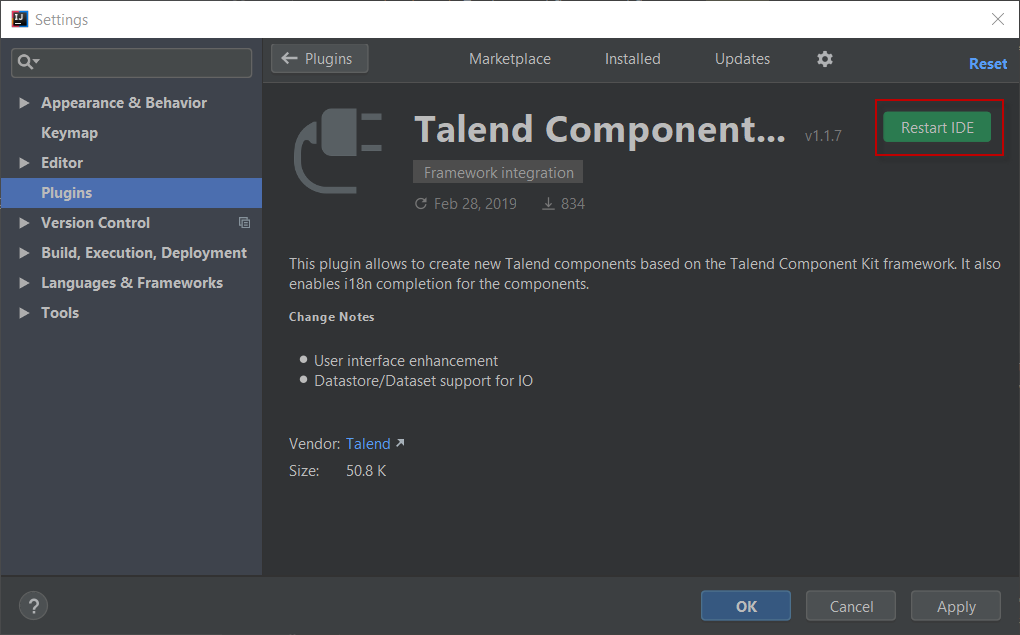

-

Click the Restart IDE button.

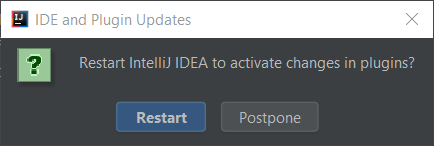

-

Confirm the IDEA restart to complete the installation.

The plugin is now installed on your IntelliJ IDEA. You can start using it.

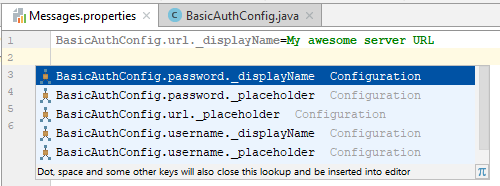

About the internationalization completion

The plugin offers auto-completion for the configuration internationalization. The Talend component configuration lets you setup translatable and user-friendly labels for your configuration using a property file. Auto-completion in possible for the configuration keys and default values in the property file.

For example, you can internationalize a simple configuration class for a basic authentication that you use in your component:

@Checkable("basicAuth")

@DataStore("basicAuth")

@GridLayout({

@GridLayout.Row({ "url" }),

@GridLayout.Row({ "username", "password" }),

})

public class BasicAuthConfig implements Serializable {

@Option

private String url;

@Option

private String username;

@Option

@Credential

private String password;

}This configuration class contains three properties which you can attach a user-friendly label to.

For example, you can define a label like My server URL for the url option:

-

Locate or create a

Messages.propertiesfile in the project resources and add the label to that file. The plugin automatically detects your configuration and provides you with key completion in the property file. -

Press Ctrl+Space to see the key suggestions.

Generating a project

The first step when developing new components is to create a project that will contain the skeleton of your components and set you on the right track.

The project generation can be achieved using the Talend Component Kit Starter or the Talend Component Kit plugin for IntelliJ.

Through a user-friendly interface, you can define the main lines of your project and of your component(s), including their name, family, type, configuration model, and so on.

Once completed, all the information filled are used to generate a project that you will use as starting point to implement the logic and layout of your components, and to iterate on them.

Once your project is generated, you can start implementing the component logic.

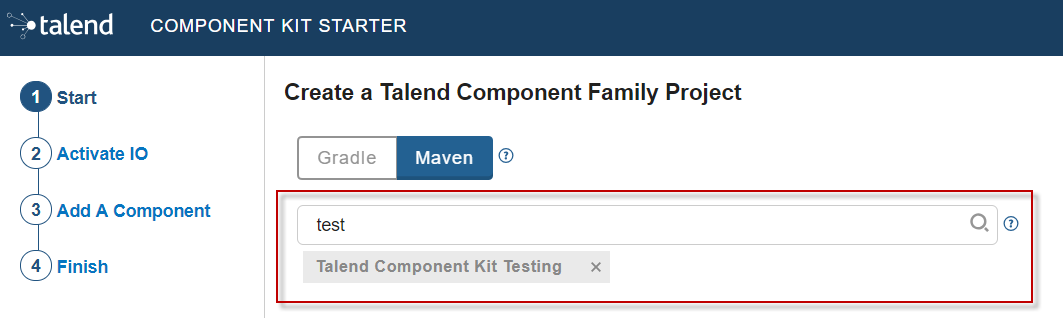

Generating a project using the Component Kit Starter

The Component Kit Starter lets you design your components configuration and generates a ready-to-implement project structure.

The Starter is available on the web or as an IntelliJ plugin.

This tutorial shows you how to use the Component Kit Starter to generate new components for MySQL databases. Before starting, make sure that you have correctly setup your environment. See this section.

| When defining a project using the Starter, do not refresh the page to avoid losing your configuration. |

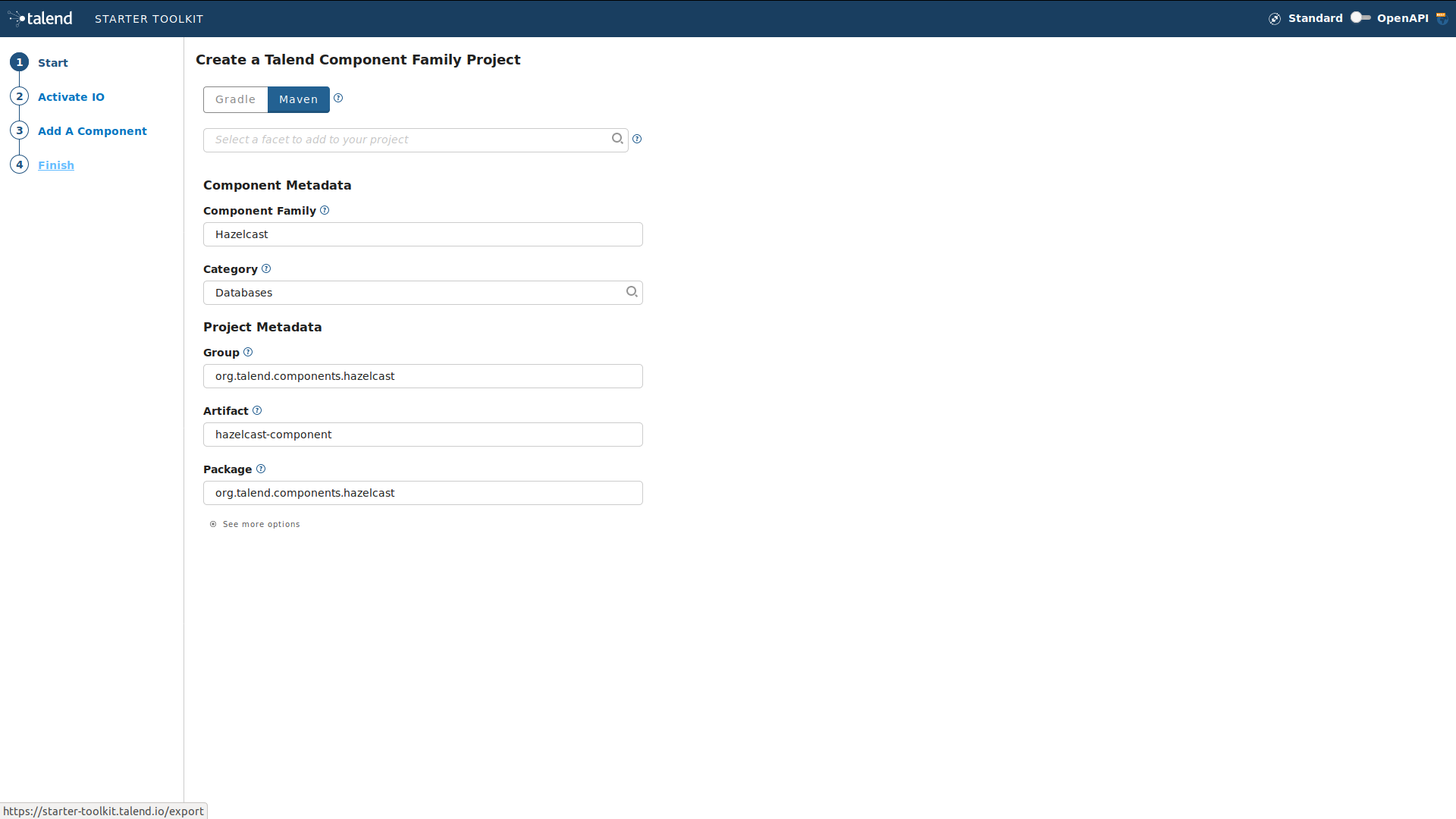

Configuring the project

Before being able to create components, you need to define the general settings of the project:

-

Create a folder on your local machine to store the resource files of the component you want to create. For example,

C:/my_components. -

Open the Starter in the web browser of your choice.

-

Select your build tool. This tutorial uses Maven.

-

Add any facet you need. For example, add the Talend Component Kit Testing facet to your project to automatically generate unit tests for the components created in the project.

-

Enter the Component Family of the components you want to develop in the project. This name must be a valid java name and is recommended to be capitalized, for example 'MySQL'.

Once you have implemented your components in the Studio, this name is displayed in the Palette to group all of the MySQL-related components you develop, and is also part of your component name. -

Select the Category of the components you want to create in the current project. As MySQL is a kind of database, select Databases in this tutorial.

This Databases category is used and displayed as the parent family of the MySQL group in the Palette of the Studio. -

Complete the project metadata by entering the Group, Artifact and Package.

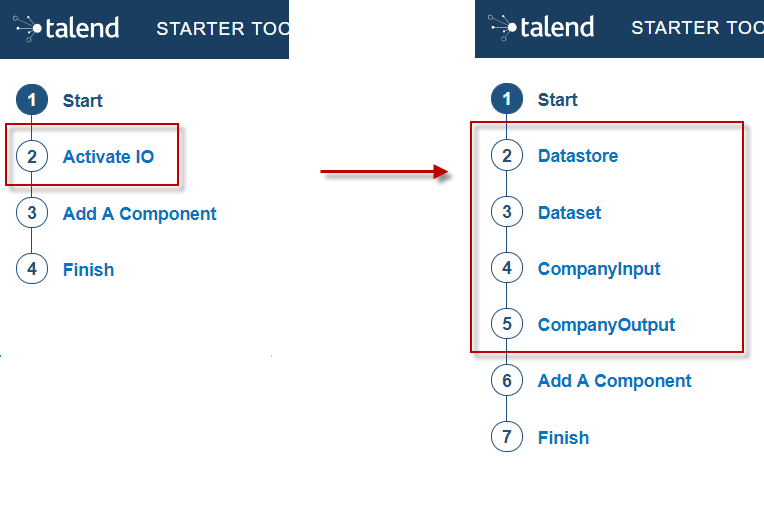

-

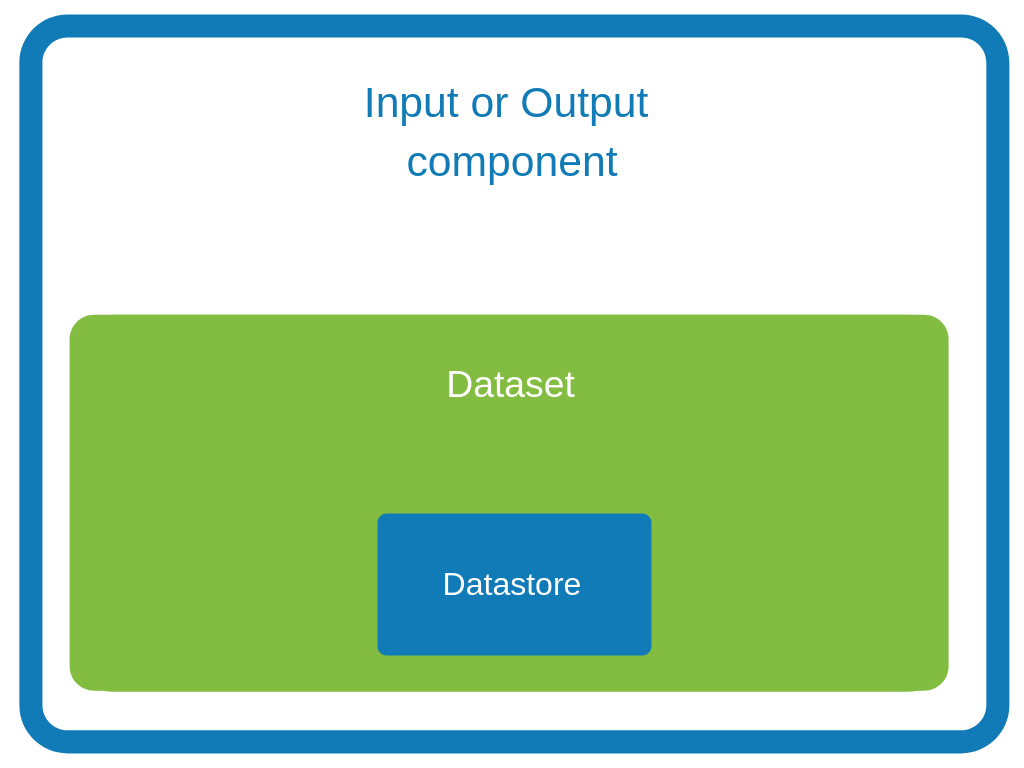

By default, you can only create processors. If you need to create Input or Output components, select Activate IO. By doing this:

-

Two new menu entries let you add datasets and datastores to your project, as they are required for input and output components.

Input and Output components without dataset (itself containing a datastore) will not pass the validation step when building the components. Learn more about datasets and datastores in this document. -

An Input component and an Output component are automatically added to your project and ready to be configured.

-

Components added to the project using Add A Component can now be processors, input or output components.

-

Defining a Datastore

A datastore represents the data needed by an input or output component to connect to a database.

When building a component, the validateDataSet validation checks that each input or output (processor without output branch) component uses a dataset and that this dataset has a datastore.

You can define one or several datastores if you have selected the Activate IO step.

-

Select Datastore. The list of datastores opens. By default, a datastore is already open but not configured. You can configure it or create a new one using Add new Datastore.

-

Specify the name of the datastore. Modify the default value to a meaningful name for your project.

This name must be a valid Java name as it will represent the datastore class in your project. It is a good practice to start it with an uppercase letter. -

Edit the datastore configuration. Parameter names must be valid Java names. Use lower case as much as possible. A typical configuration includes connection details to a database:

-

url

-

username

-

password.

-

-

Save the datastore configuration.

Defining a Dataset

A dataset represents the data coming from or sent to a database and needed by input and output components to operate.

The validateDataSet validation checks that each input or output (processor without output branch) component uses a dataset and that this dataset has a datastore.

You can define one or several datasets if you have selected the Activate IO step.

-

Select Dataset. The list of datasets opens. By default, a dataset is already open but not configured. You can configure it or create a new one using the Add new Dataset button.

-

Specify the name of the dataset. Modify the default value to a meaningful name for your project.

This name must be a valid Java name as it will represent the dataset class in your project. It is a good practice to start it with an uppercase letter. -

Edit the dataset configuration. Parameter names must be valid Java names. Use lower case as much as possible. A typical configuration includes details of the data to retrieve:

-

Datastore to use (that contains the connection details to the database)

-

table name

-

data

-

-

Save the dataset configuration.

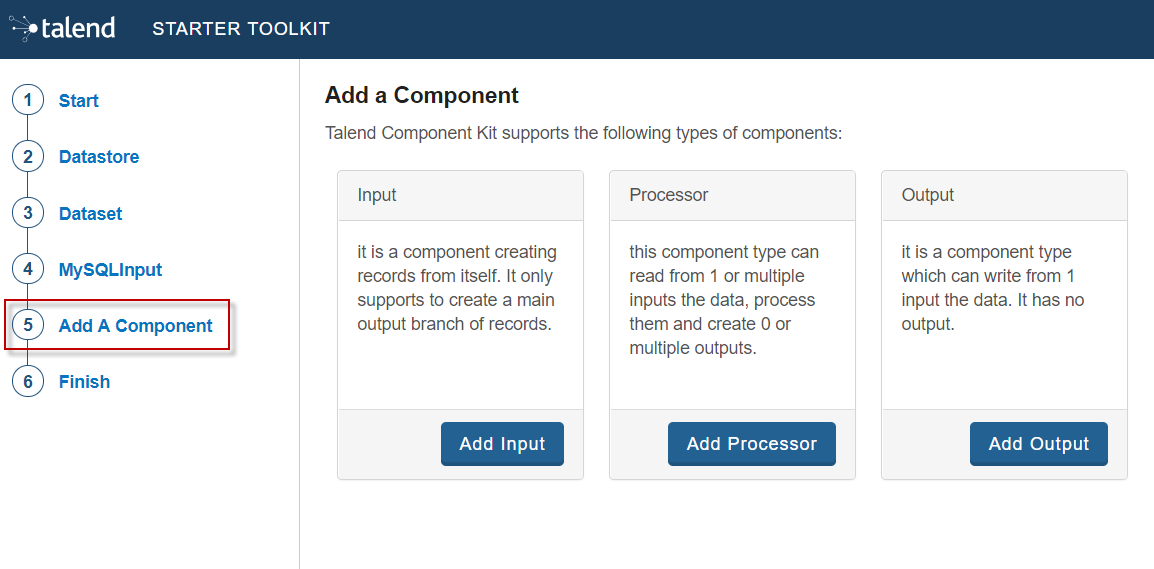

Creating an Input component

To create an input component, make sure you have selected Activate IO.

When clicking Add A Component in the Starter, a new step allows you to define a new component in your project.

The intent in this tutorial is to create an input component that connects to a MySQL database, executes a SQL query and gets the result.

-

Choose the component type. Input in this case.

-

Enter the component name. For example, MySQLInput.

-

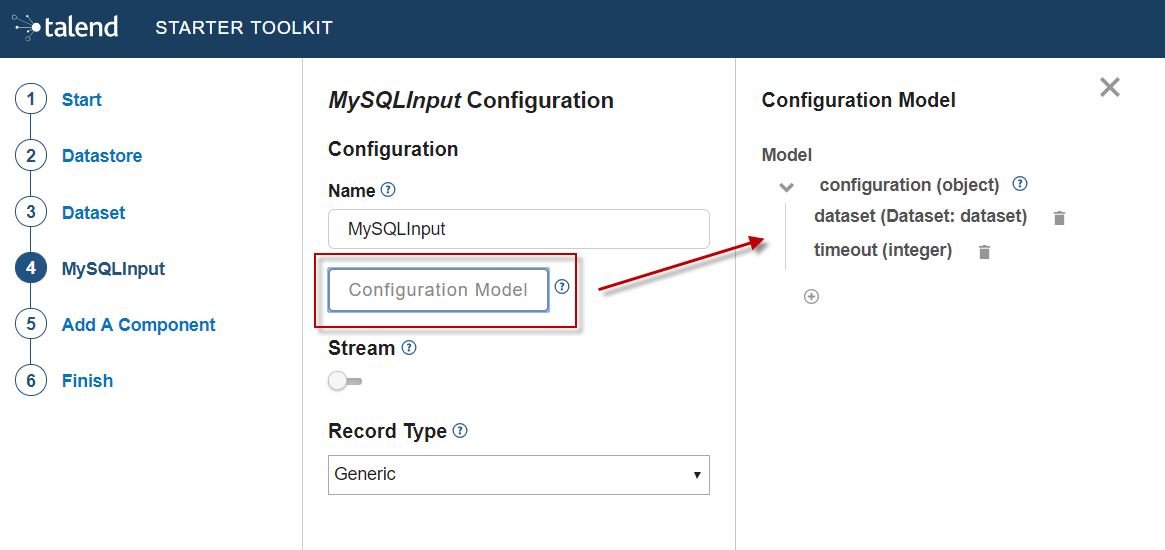

Click Configuration model. This button lets you specify the required configuration for the component. By default, a dataset is already specified.

-

For each parameter that you need to add, click the (+) button on the right panel. Enter the parameter name and choose its type then click the tick button to save the changes.

In this tutorial, to be able to execute a SQL query on the Input MySQL database, the configuration requires the following parameters:+ -

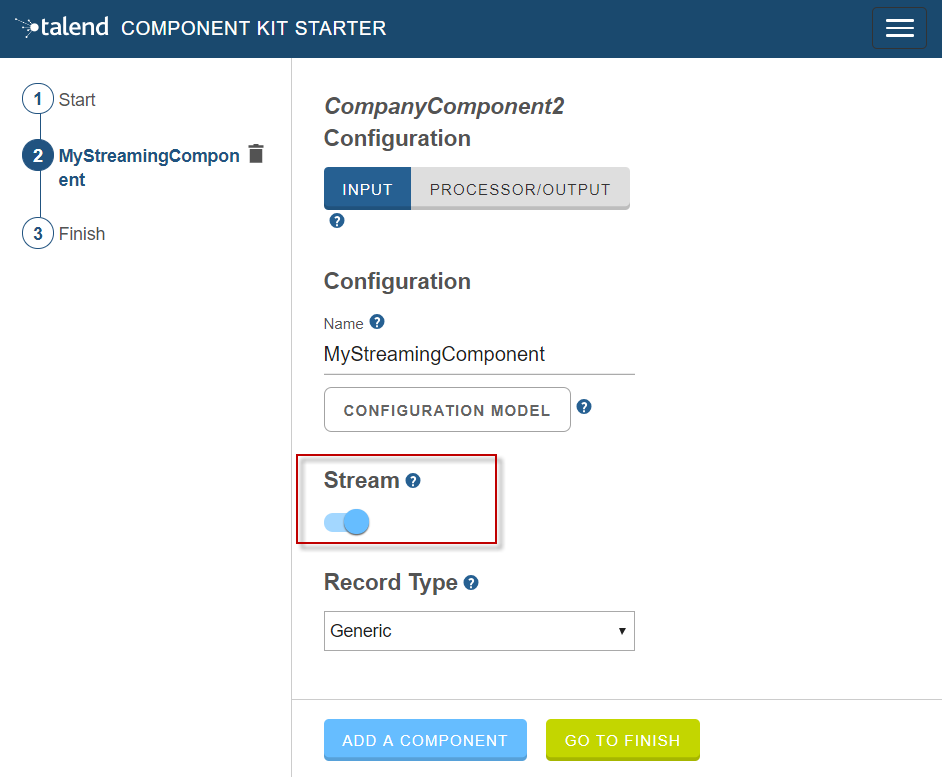

Specify whether the component issues a stream or not. In this tutorial, the MySQL input component created is an ordinary (non streaming) component. In this case, leave the Stream option disabled.

-

Select the Record Type generated by the component. In this tutorial, select Generic because the component is designed to generate records in the default

Recordformat.

You can also select Custom to define a POJO that represents your records.

Your input component is now defined. You can add another component or generate and download your project.

Creating a Processor component

When clicking Add A Component in the Starter, a new step allows you to define a new component in your project. The intent in this tutorial is to create a simple processor component that receives a record, logs it and returns it at it is.

|

If you did not select Activate IO, all new components you add to the project are processors by default. If you selected Activate IO, you can choose the component type. In this case, to create a Processor component, you have to manually add at least one output. |

-

If required, choose the component type: Processor in this case.

-

Enter the component name. For example, RecordLogger, as the processor created in this tutorial logs the records.

-

Specify the Configuration Model of the component. In this tutorial, the component doesn’t need any specific configuration. Skip this step.

-

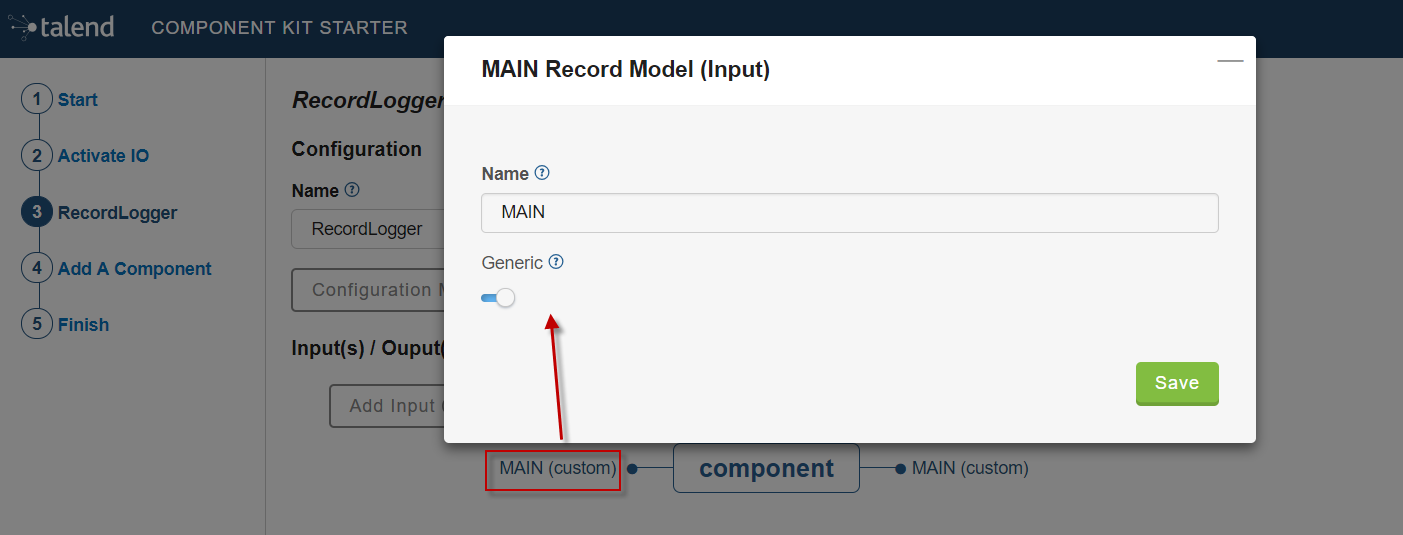

Define the Input(s) of the component. For each input that you need to define, click Add Input. In this tutorial, only one input is needed to receive the record to log.

-

Click the input name to access its configuration. You can change the name of the input and define its structure using a POJO. If you added several inputs, repeat this step for each one of them.

The input in this tutorial is a generic record. Enable the Generic option and click Save. -

Define the Output(s) of the component. For each output that you need to define, click Add Output. The first output must be named

MAIN. In this tutorial, only one generic output is needed to return the received record.

Outputs can be configured the same way as inputs (see previous steps).

You can define a reject output connection by naming itREJECT. This naming is used by Talend applications to automatically set the connection type to Reject.

Your processor component is now defined. You can add another component or generate and download your project.

Creating an Output component

To create an output component, make sure you have selected Activate IO.

When clicking Add A Component in the Starter, a new step allows you to define a new component in your project.

The intent in this tutorial is to create an output component that receives a record and inserts it into a MySQL database table.

| Output components are Processors without any output. In other words, the output is a processor that does not produce any records. |

-

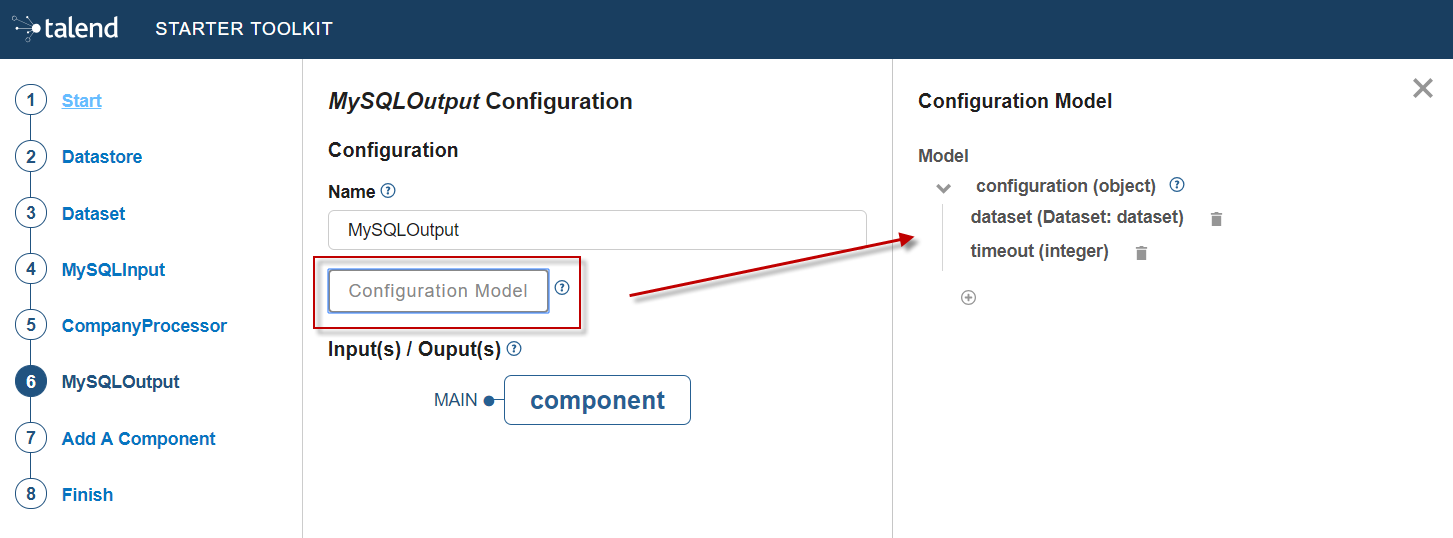

Choose the component type. Output in this case.

-

Enter the component name. For example, MySQLOutput.

-

Click Configuration Model. This button lets you specify the required configuration for the component. By default, a dataset is already specified.

-

For each parameter that you need to add, click the (+) button on the right panel. Enter the name and choose the type of the parameter, then click the tick button to save the changes.

In this tutorial, to be able to insert a record in the output MySQL database, the configuration requires the following parameters:+-

a dataset (which contains the datastore with the connection information)

-

a timeout parameter.

Closing the configuration panel on the right does not delete your configuration. However, refreshing the page resets the configuration.

-

-

Define the Input(s) of the component. For each input that you need to define, click Add Input. In this tutorial, only one input is needed.

-

Click the input name to access its configuration. You can change the name of the input and define its structure using a POJO. If you added several inputs, repeat this step for each one of them.

The input in this tutorial is a generic record. Enable the Generic option and click Save.

| Do not create any output because the component does not produce any record. This is the only difference between an output an a processor component. |

Your output component is now defined. You can add another component or generate and download your project.

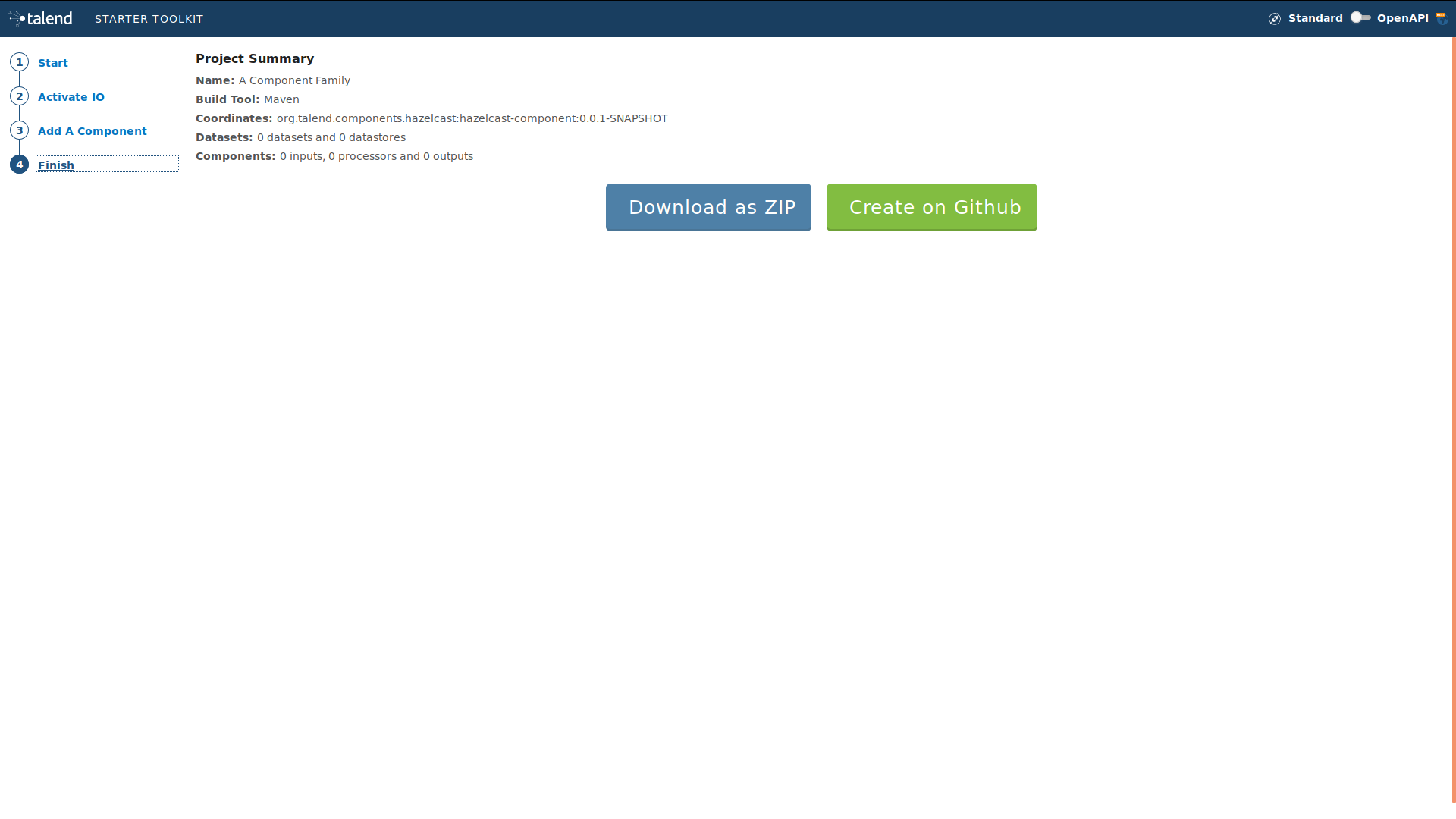

Generating and downloading the final project

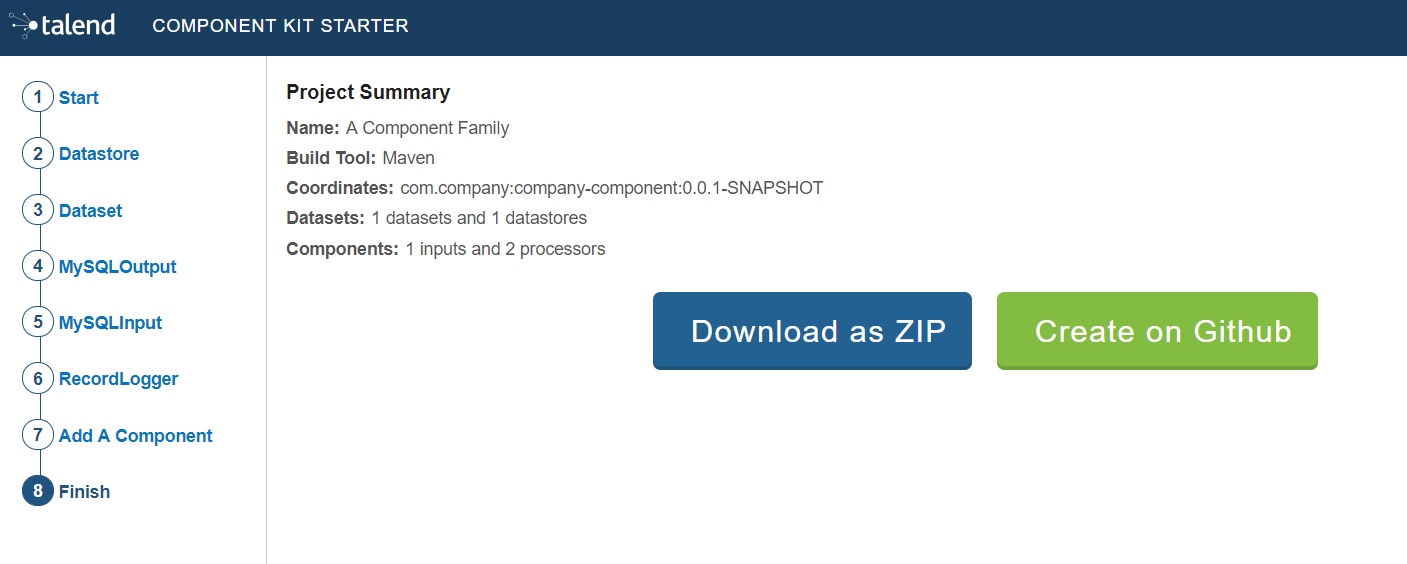

Once your project is configured and all the components you need are created, you can generate and download the final project. In this tutorial, the project was configured and three components of different types (input, processor and output) have been defined.

-

Click Finish on the left panel. You are redirected to a page that summarizes the project. On the left panel, you can also see all the components that you added to the project.

-

Generate the project using one of the two options available:

-

Download it locally as a ZIP file using the Download as ZIP button.

-

Create a GitHub repository and push the project to it using the Create on Github button.

-

In this tutorial, the project is downloaded to the local machine as a ZIP file.

Compiling and exploring the generated project files

Once the package is available on your machine, you can compile it using the build tool selected when configuring the project.

-

In the tutorial, Maven is the build tool selected for the project.

In the project directory, execute themvn packagecommand.

If you don’t have Maven installed on your machine, you can use the Maven wrapper provided in the generated project, by executing the./mvnw packagecommand.

The generated project code contains documentation that can guide and help you implementing the component logic. Import the project to your favorite IDE to start the implementation.

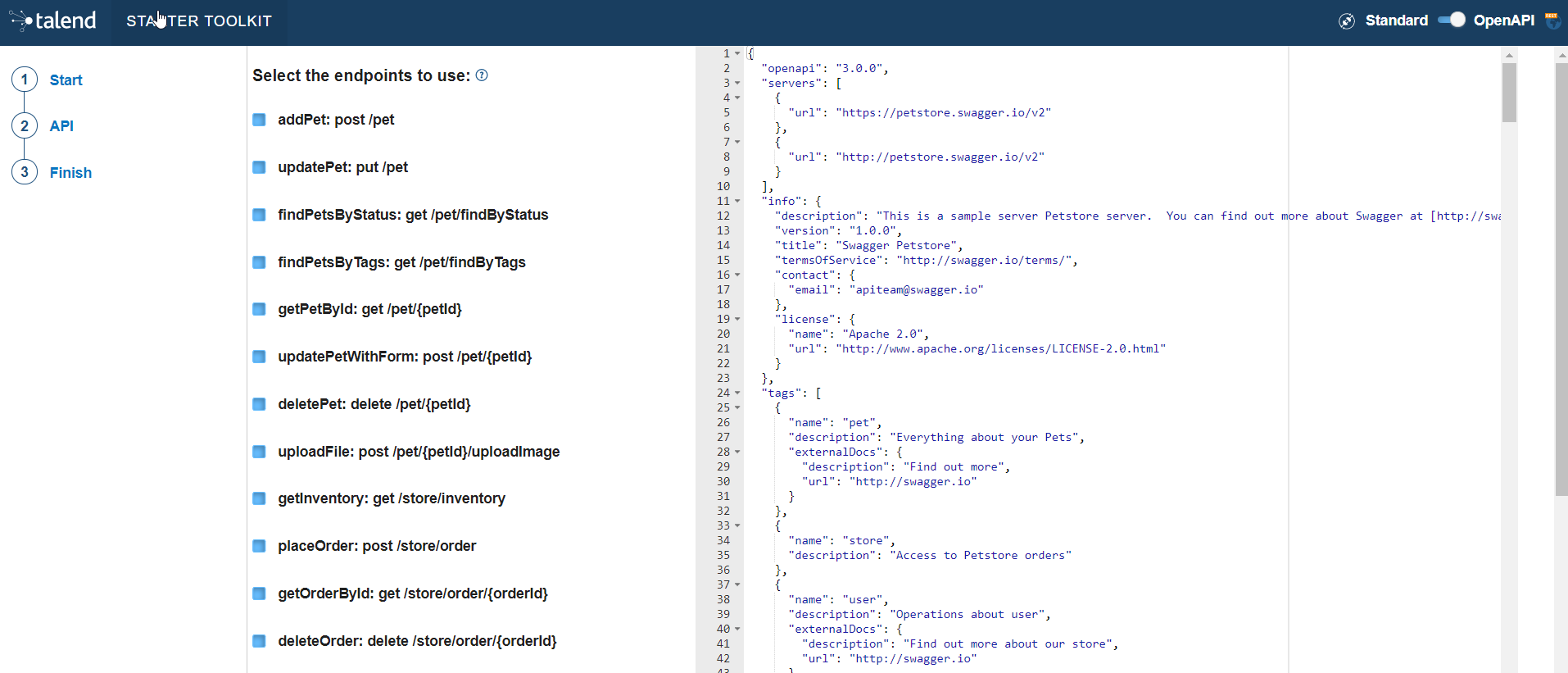

Generating a project using an OpenAPI JSON descriptor

The Component Kit Starter allows you to generate a component development project from an OpenAPI JSON descriptor.

-

Open the Starter in the web browser of your choice.

-

Enable the OpenAPI mode using the toggle in the header.

-

Go to the API menu.

-

Paste the OpenAPI JSON descriptor in the right part of the screen. All the described endpoints are detected.

-

Unselect the endpoints that you do not want to use in the future components. By default, all detected endpoints are selected.

-

Go to the Finish menu.

-

Download the project.

When exploring the project generated from an OpenAPI descriptor, you can notice the following elements:

-

sources

-

the API dataset

-

an HTTP client for the API

-

a connection folder containing the component configuration. By default, the configuration is only made of a simple datastore with a

baseUrlparameter.

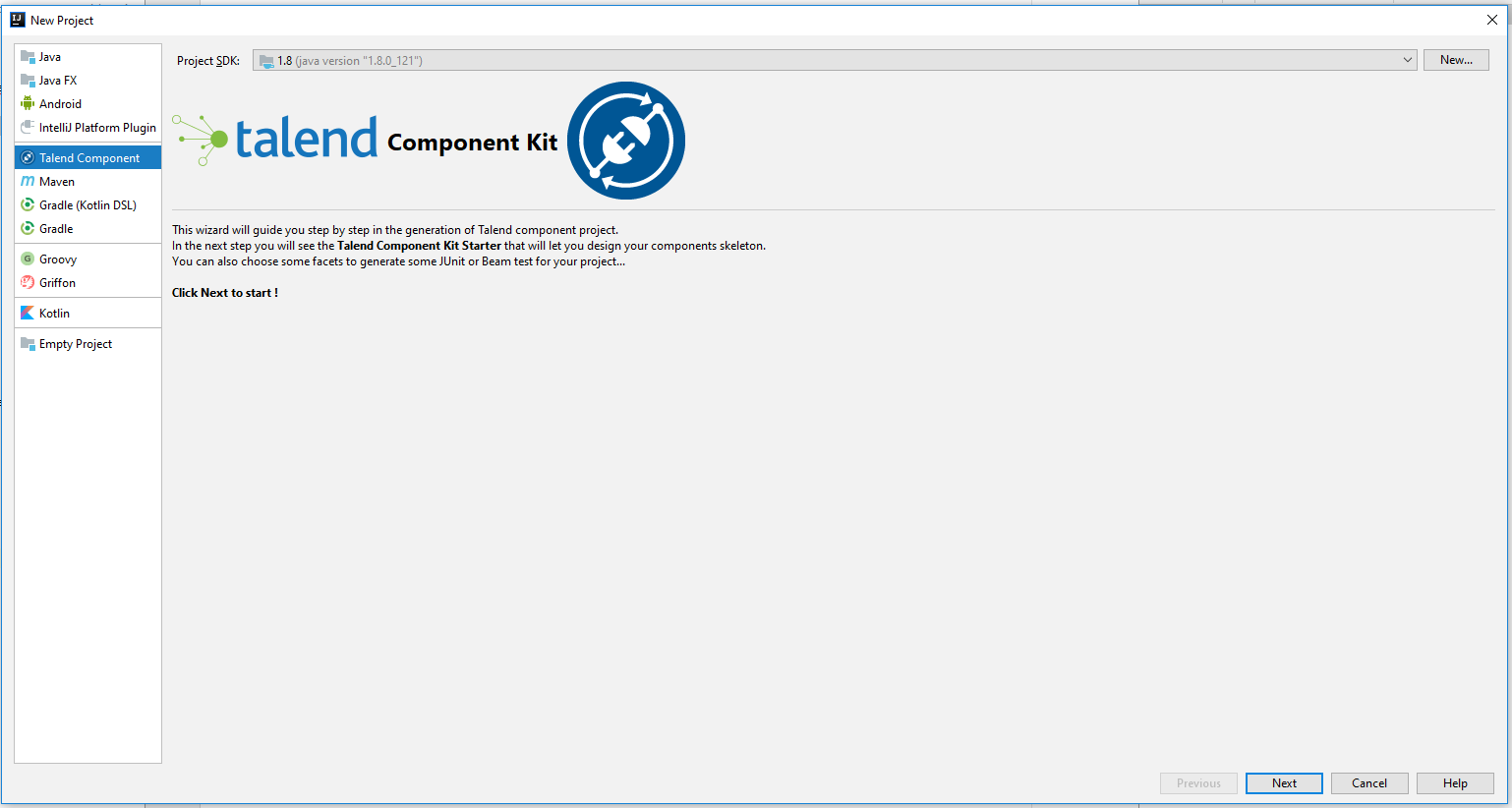

Generating a project using IntelliJ plugin

Once the plugin installed, you can generate a component project.

-

Select File > New > Project.

-

In the New Project wizard, choose Talend Component and click Next.

The plugin loads the component starter and lets you design your components. For more information about the Talend Component Kit starter, check this tutorial.

-

Once your project is configured, select Next, then click Finish.

The project is automatically imported into the IDEA using the build tool that you have chosen.

Implementing components

Once you have generated a project, you can start implementing the logic and layout of your components and iterate on it. Depending on the type of component you want to create, the logic implementation can differ. However, the layout and component metadata are defined the same way for all types of components in your project. The main steps are:

In some cases, you will require specific implementations to handle more advanced cases, such as:

You can also make certain configurations reusable across your project by defining services. Using your Java IDE along with a build tool supported by the framework, you can then compile your components to test and deploy them to Talend Studio or other Talend applications:

In any case, follow these best practices to ensure the components you develop are optimized.

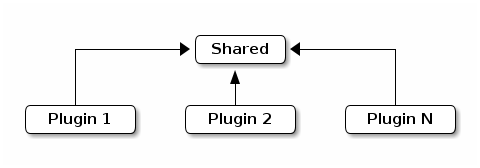

You can also learn more about component loading and plugins here:

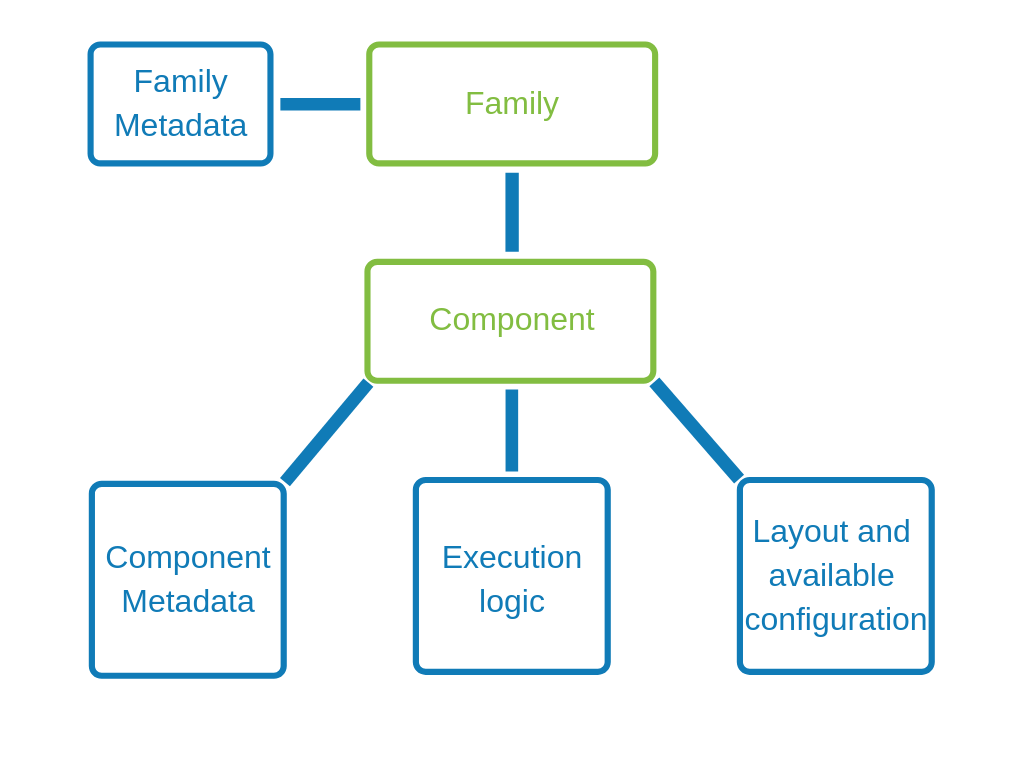

Registering components

Before implementing a component logic and configuration, you need to specify the family and the category it belongs to, the component type and name, as well as its name and a few other generic parameters. This set of metadata, and more particularly the family, categories and component type, is mandatory to recognize and load the component to Talend Studio or Cloud applications.

Some of these parameters are handled at the project generation using the starter, but can still be accessed and updated later on.

Component family and categories

The family and category of a component is automatically written in the package-info.java file of the component package, using the @Components annotation. By default, these parameters are already configured in this file when you import your project in your IDE. Their value correspond to what was defined during the project definition with the starter.

Multiple components can share the same family and category value, but the family + name pair must be unique for the system.

A component can belong to one family only and to one or several categories. If not specified, the category defaults to Misc.

The package-info.java file also defines the component family icon, which is different from the component icon. You can learn how to customize this icon in this section.

Here is a sample package-info.java:

@Components(name = "my_component_family", categories = "My Category")

package org.talend.sdk.component.sample;

import org.talend.sdk.component.api.component.Components;Another example with an existing component:

@Components(name = "Salesforce", categories = {"Business", "Cloud"})

package org.talend.sdk.component.sample;

import org.talend.sdk.component.api.component.Components;Component icon and version

Components can require metadata to be integrated in Talend Studio or Cloud platforms.

Metadata is set on the component class and belongs to the org.talend.sdk.component.api.component package.

When you generate your project and import it in your IDE, icon and version both come with a default value.

-

@Icon: Sets an icon key used to represent the component. You can use a custom key with the

custom()method but the icon may not be rendered properly. The icon defaults to Check.

Replace it with a custom icon, as described in this section. -

@Version: Sets the component version. 1 by default.

Learn how to manage different versions and migrations between your component versions in this section.

For example:

@Version(1)

@Icon(FILE_XML_O)

@PartitionMapper(name = "jaxbInput")

public class JaxbPartitionMapper implements Serializable {

// ...

}Defining a custom icon for a component or component family

Every component family and component needs to have a representative icon.

You have to define a custom icon as follows:

-

For the component family the icon is defined in the

package-info.javafile. -

For the component itself, you need to declare the icon in the component class.

Custom icons must comply with the following requirements:

-

Icons must be stored in the

src/main/resources/iconsfolder of the project. -

Icon file names need to match one of the following patterns:

IconName.svgorIconName_icon32.png. The latter will run in degraded mode in Talend Cloud. ReplaceIconNameby the name of your choice. -

The icon size for PNG must be 32x32. For SVG, the viewBox must be 16x16.

-

Icons must be squared, even for the SVG format.

@Icon(value = Icon.IconType.CUSTOM, custom = "IconName")|

Note that SVG icons are not supported by Talend Studio and can cause the deployment of the component to fail. If you aim at deploying a custom component to Talend Studio, specify PNG icons or use the Maven Ultimately, you can also remove SVG parameters from the |

Component extra metadatas

For any purpose, you can also add user defined metadatas to your component with the @Metadatas annotation.

Example:

@Processor(name = "my_processor")

@Metadatas({

@Metadata(key = "user::value0", value = "myValue0"),

@Metadata(key = "user::value1", value = "myValue1")

})

public class MyProcessor {

}You can also use a SPI implementing org.talend.sdk.component.spi.component.ComponentMetadataEnricher.

Defining datasets and datastores

Datasets and datastores are configuration types that define how and where to pull the data from. They are used at design time to create shared configurations that can be stored and used at runtime.

All connectors (input and output components) created using Talend Component Kit must reference a valid dataset. Each dataset must reference a datastore.

-

Datastore: The data you need to connect to the backend.

-

Dataset: A datastore coupled with the data you need to execute an action.

|

Make sure that:

These rules are enforced by the |

Defining a datastore

A datastore defines the information required to connect to a data source. For example, it can be made of:

-

a URL

-

a username

-

a password.

You can specify a datastore and its context of use (in which dataset, etc.) from the Component Kit Starter.

| Make sure to modelize the data your components are designed to handle before defining datasets and datastores in the Component Kit Starter. |

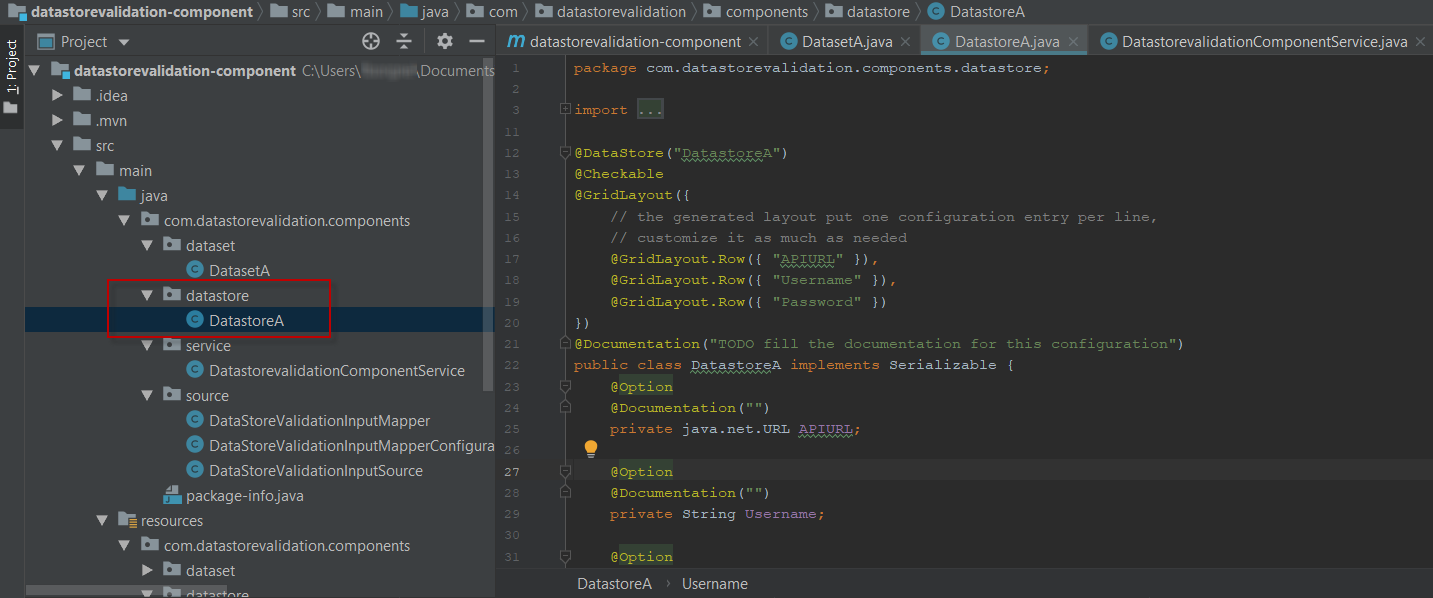

Once you generate and import the project into an IDE, you can find datastores under a specific datastore node.

Example of datastore:

package com.mycomponent.components.datastore;

@DataStore("DatastoreA") (1)

@GridLayout({ (2)

// The generated component layout will display one configuration entry per line.

// Customize it as much as needed.

@GridLayout.Row({ "apiurl" }),

@GridLayout.Row({ "username" }),

@GridLayout.Row({ "password" })

})

@Documentation("A Datastore made of an API URL, a username, and a password. The password is marked as Credential.") (3)

public class DatastoreA implements Serializable {

@Option

@Documentation("")

private String apiurl;

@Option

@Documentation("")

private String username;

@Option

@Credential

@Documentation("")

private String password;

public String getApiurl() {

return apiurl;

}

public DatastoreA setApiurl(String apiurl) {

this.apiurl = apiurl;

return this;

}

public String getUsername() {

return Username;

}

public DatastoreA setuUsername(String username) {

this.username = username;

return this;

}

public String getPassword() {

return password;

}

public DatastoreA setPassword(String password) {

this.password = password;

return this;

}

}| 1 | Identifying the class as a datastore and naming it. |

| 2 | Defining the layout of the datastore configuration. |

| 3 | Defining each element of the configuration: a URL, a username, and a password. Note that the password is also marked as a credential. |

Defining a dataset

A dataset represents the inbound data. It is generally made of:

-

A datastore that defines the connection information needed to access the data.

-

A query.

You can specify a dataset and its context of use (in which input and output component it is used) from the Component Kit Starter.

| Make sure to modelize the data your components are designed to handle before defining datasets and datastores in the Component Kit Starter. |

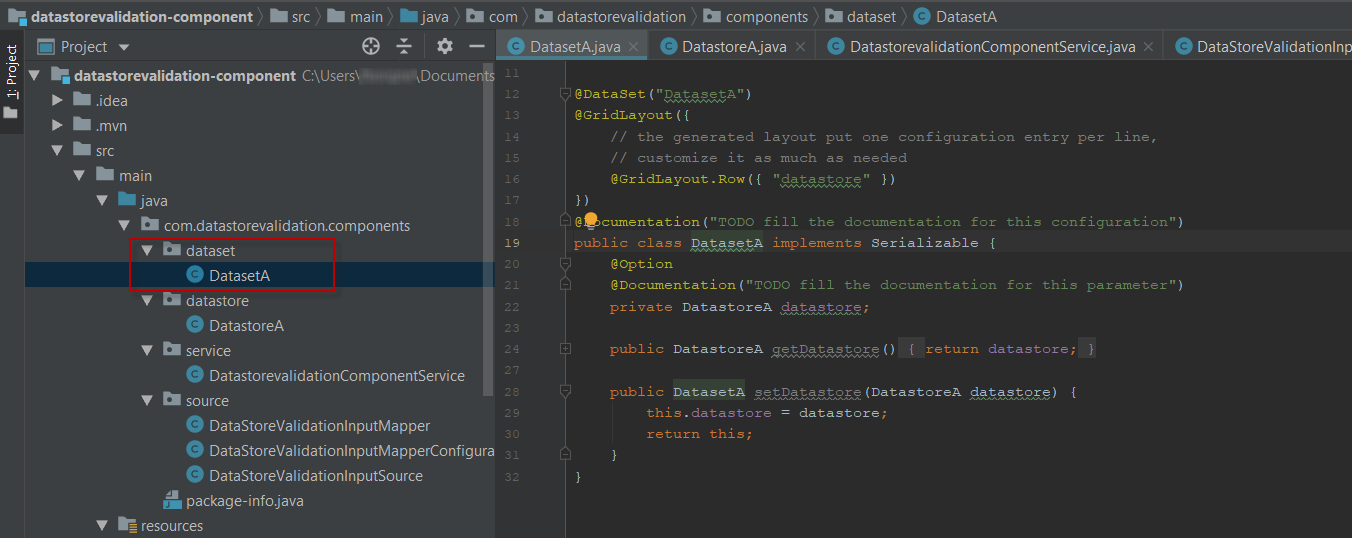

Once you generate and import the project into an IDE, you can find datasets under a specific dataset node.

Example of dataset referencing the datastore shown above:

package com.datastorevalidation.components.dataset;

@DataSet("DatasetA") (1)

@GridLayout({

// The generated component layout will display one configuration entry per line.

// Customize it as much as needed.

@GridLayout.Row({ "datastore" })

})

@Documentation("A Dataset configuration containing a simple datastore") (2)

public class DatasetA implements Serializable {

@Option

@Documentation("Datastore")

private DatastoreA datastore;

public DatastoreA getDatastore() {

return datastore;

}

public DatasetA setDatastore(DatastoreA datastore) {

this.datastore = datastore;

return this;

}

}| 1 | Identifying the class as a dataset and naming it. |

| 2 | Implementing the dataset and referencing DatastoreA as the datastore to use. |

Internationalizing datasets and datastores

The display name of each dataset and datastore must be referenced in the message.properties file of the family package.

The key for dataset and datastore display names follows a defined pattern: ${family}.${configurationType}.${name}._displayName. For example:

ComponentFamily.dataset.DatasetA._displayName=Dataset A

ComponentFamily.datastore.DatastoreA._displayName=Datastore AThese keys are automatically added for datasets and datastores defined from the Component Kit Starter.

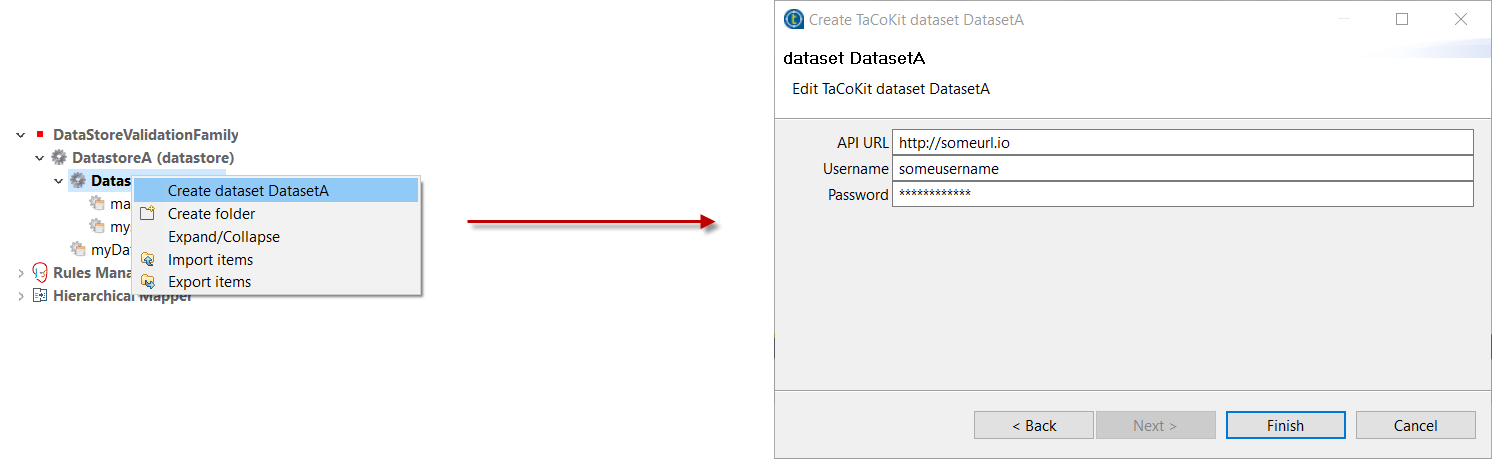

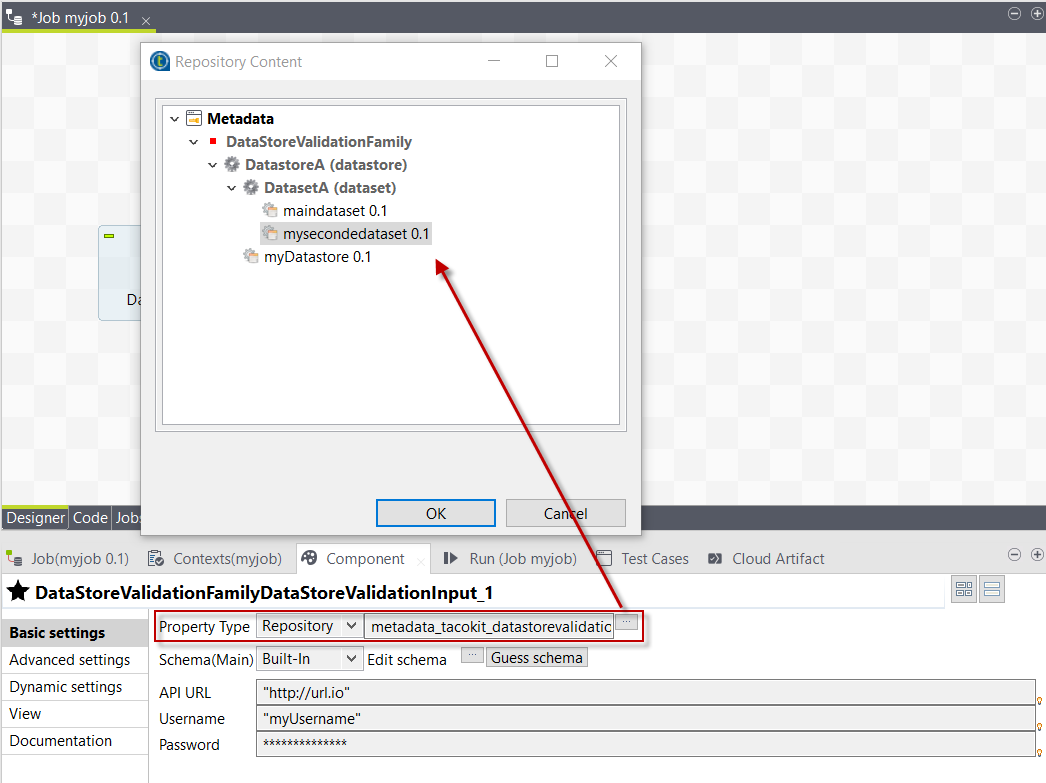

Reusing datasets and datastores in Talend Studio

When deploying a component or set of components that include datasets and datastores to Talend Studio, a new node is created under Metadata. This node has the name of the component family that was deployed.

It allows users to create reusable configurations for datastores and datasets.

With predefined datasets and datastores, users can then quickly fill the component configuration in their jobs. They can do so by selecting Repository as Property Type and by browsing to the predefined dataset or datastore.

How to create a reusable connection in Studio

Studio will generate connection and close components auto for reusing connection function in input and output components, just need to do like this example:

@Service

public class SomeService {

@CreateConnection

public Object createConn(@Option("configuration") SomeDataStore dataStore) throws ComponentException {

Object connection = null;

//get conn object by dataStore

return conn;

}

@CloseConnection

public CloseConnectionObject closeConn() {

return new CloseConnectionObject() {

public boolean close() throws ComponentException {

Object connection = this.getConnection();

//do close action

return true;

}

};

}

}Then the runtime mapper and processor only need to use @Connection to get the connection like this:

@Version(1)

@Icon(value = Icon.IconType.CUSTOM, custom = "SomeInput")

@PartitionMapper(name = "SomeInput")

@Documentation("the doc")

public class SomeInputMapper implements Serializable {

@Connection

SomeConnection conn;

}How does the component server interact with datasets and datastores

The component server scans all configuration types and returns a configuration type index. This index can be used for the integration into the targeted platforms (Studio, web applications, and so on).

Dataset

Mark a model (complex object) as being a dataset.

-

API: @org.talend.sdk.component.api.configuration.type.DataSet

-

Sample:

{

"tcomp::configurationtype::name":"test",

"tcomp::configurationtype::type":"dataset"

}Datastore

Mark a model (complex object) as being a datastore (connection to a backend).

-

API: @org.talend.sdk.component.api.configuration.type.DataStore

-

Sample:

{

"tcomp::configurationtype::name":"test",

"tcomp::configurationtype::type":"datastore"

}DatasetDiscovery

Mark a model (complex object) as being a dataset discovery configuration.

-

API: @org.talend.sdk.component.api.configuration.type.DatasetDiscovery

-

Sample:

{

"tcomp::configurationtype::name":"test",

"tcomp::configurationtype::type":"datasetDiscovery"

}DynamicDependenciesConfiguration

Mark a model (complex object) as being the configuration expected to compute dynamic dependencies.

-

API: @org.talend.sdk.component.api.configuration.type.DynamicDependenciesConfiguration

-

Sample:

{

"tcomp::configurationtype::name":"test",

"tcomp::configurationtype::type":"dynamicDependenciesConfiguration"

}Checkpoint

Mark a model (complex object) as being a checkpoint configuration and state.

-

API: @org.talend.sdk.component.api.input.checkpoint.Checkpoint

-

Sample:

{

"tcomp::configurationtype::name":"test",

"tcomp::configurationtype::type":"checkpoint"

}| The component family associated with a configuration type (datastore/dataset) is always the one related to the component using that configuration. |

The configuration type index is represented as a flat tree that contains all the configuration types, which themselves are represented as nodes and indexed by ID.

Every node can point to other nodes. This relation is represented as an array of edges that provides the child IDs.

As an illustration, a configuration type index for the example above can be defined as follows:

{nodes: {

"idForDstore": { datastore:"datastore data", edges:[id:"idForDset"] },

"idForDset": { dataset:"dataset data" }

}

}Defining an input component logic

Input components are the components generally placed at the beginning of a Talend job. They are in charge of retrieving the data that will later be processed in the job.

An input component is primarily made of three distinct logics:

-

The execution logic of the component itself, defined through a partition mapper.

-

The configurable part of the component, defined through the mapper configuration.

-

The source logic defined through a producer.

Before implementing the component logic and defining its layout and configurable fields, make sure you have specified its basic metadata, as detailed in this document.

Defining a partition mapper

What is a partition mapper

A Partition Mapper (PartitionMapper) is a component able to split itself to make the execution more efficient.

This concept is borrowed from big data and useful in this context only (BEAM executions).

The idea is to divide the work before executing it in order to reduce the overall execution time.

The process is the following:

-

The size of the data you work on is estimated. This part can be heuristic and not very precise.

-

From that size, the execution engine (runner for Beam) requests the mapper to split itself in N mappers with a subset of the overall work.

-

The leaf (final) mapper is used as a

Producer(actual reader) factory.

This kind of component must be Serializable to be distributable.

|

Implementing a partition mapper

A partition mapper requires three methods marked with specific annotations:

-

@Assessorfor the evaluating method -

@Splitfor the dividing method -

@Emitterfor theProducerfactory

@Assessor

The Assessor method returns the estimated size of the data related to the component (depending its configuration).

It must return a Number and must not take any parameter.

For example:

@Assessor

public long estimateDataSetByteSize() {

return ....;

}@Split

The Split method returns a collection of partition mappers and can take optionally a @PartitionSize long value as parameter, which is the requested size of the dataset per sub partition mapper.

For example:

@Split

public List<MyMapper> split(@PartitionSize final long desiredSize) {

return ....;

}Defining the producer method

The Producer defines the source logic of an input component. It handles the interaction with a physical source and produces input data for the processing flow.

A producer must have a @Producer method without any parameter. It is triggered by the @Emitter method of the partition mapper and can return any data. It is defined in the <component_name>Source.java file:

@Producer

public MyData produces() {

return ...;

}Defining a processor or an output component logic

Processors and output components are the components in charge of reading, processing and transforming data in a Talend job, as well as passing it to its required destination.

Before implementing the component logic and defining its layout and configurable fields, make sure you have specified its basic metadata, as detailed in this document.

Defining a processor

What is a processor

A Processor is a component that converts incoming data to a different model.

A processor must have a method decorated with @ElementListener taking an incoming data and returning the processed data:

@ElementListener

public MyNewData map(final MyData data) {

return ...;

}Processors must be Serializable because they are distributed components.

If you just need to access data on a map-based ruleset, you can use Record or JsonObject as parameter type.

From there, Talend Component Kit wraps the data to allow you to access it as a map. The parameter type is not enforced.

This means that if you know that you will get a SuperCustomDto bean, then you can use it as parameter type. But for generic components that are reusable in any chain, it is highly encouraged to use Record until you have an evaluation language-based processor that has its own way to access components.

For example:

@ElementListener

public MyNewData map(final Record incomingData) {

String name = incomingData.getString("name");

int name = incomingData.getInt("age");

return ...;

}

// equivalent to (using POJO subclassing)

public class Person {

private String age;

private int age;

// getters/setters

}

@ElementListener

public MyNewData map(final Person person) {

String name = person.getName();

int age = person.getAge();

return ...;

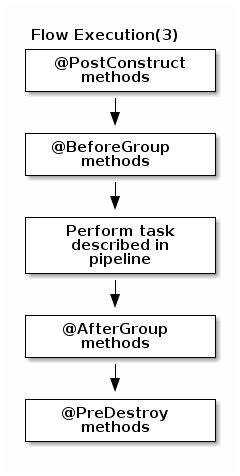

}A processor also supports @BeforeGroup and @AfterGroup methods, which must not have any parameter and return void values. Any other result would be ignored.

These methods are used by the runtime to mark a chunk of the data in a way which is estimated good for the execution flow size.

Because the size is estimated, the size of a group can vary. It is even possible to have groups of size 1.

|

It is recommended to batch records, for performance reasons:

@BeforeGroup

public void initBatch() {

// ...

}

@AfterGroup

public void endBatch() {

// ...

}You can optimize the data batch processing by using the maxBatchSize parameter. This parameter is automatically implemented on the component when it is deployed to a Talend application. Only the logic needs to be implemented. You can however customize its value setting in your LocalConfiguration the property _maxBatchSize.value - for the family - or ${component simple class name}._maxBatchSize.value - for a particular component, otherwise its default will be 1000. If you replace value by active, you can also configure if this feature is enabled or not. This is useful when you don’t want to use it at all. Learn how to implement chunking/bulking in this document.

Defining output connections

In some cases, you may need to split the output of a processor in two or more connections. A common example is to have "main" and "reject" output connections where parts of the incoming data are passed to a specific bucket for later processing.

Talend Component Kit supports two types of output connections: Flow and Reject.

-

Flow is the main and standard output connection.

-

The Reject connection handles records rejected during the processing. A component can only have one reject connection, if any. Its name must be

REJECTto be processed correctly in Talend applications.

| You can also define the different output connections of your component in the Starter. |

To define an output connection, you can use @Output as replacement of the returned value in the @ElementListener:

@ElementListener

public void map(final MyData data, @Output final OutputEmitter<MyNewData> output) {

output.emit(createNewData(data));

}Alternatively, you can pass a string that represents the new branch:

@ElementListener

public void map(final MyData data,

@Output final OutputEmitter<MyNewData> main,

@Output("REJECT") final OutputEmitter<MyNewDataWithError> rejected) {

if (isRejected(data)) {

rejected.emit(createNewData(data));

} else {

main.emit(createNewData(data));

}

}

// or

@ElementListener

public MyNewData map(final MyData data,

@Output("REJECT") final OutputEmitter<MyNewDataWithError> rejected) {

if (isSuspicious(data)) {

rejected.emit(createNewData(data));

return createNewData(data); // in this case the processing continues but notifies another channel

}

return createNewData(data);

}Defining conditional outputs flows

Processors @ElementListener methods can declare several output flows. During the design phase, all output flows are typically available. However, in some cases, we may want to disable certain flows based on the user’s configuration settings. In such instances, a service will be called to determine the available output flows. (Currently, only Studio supports this feature.)

-

A processor without @ConditionalOutputFlows keep the current behavior. All declared flows are visible at design time

-

A processor with @ConditionalOutputFlows has its output flows list conditioned by its configuration

-

The @ConditionalOutputFlows has one parameter: the name of a @AvailableOutputFlows service: @ConditionalOutputFlows("the_name")

-

Each processor in a same TCK container can use a different service to retrieve the list of available output flows

-

So several services can be annotated with @AvailableOutputFlows but their name must be different @AvailableOutputFlows("the_name"), @AvailableOutputFlow("another_name"), …

-

-

-

The return type of a @AvailableOutputFlows service is a List<String>, the build should fail if not.

@Processor(name = "Processor")

@ConditionalOutputFlows("my_service_that_return_active_flows")

public class MyProcessor implements Serializable {

@ElementListener

public void process(@Input final Record input,

@Output final OutputEmitter<Record> main,

@Output("second_flow") final OutputEmitter<Record> second,

@Output("third_flow") final OutputEmitter<Record> third) {

[.........]

}

}

@AvailableOutputFlows("my_service_that_return_active_flows")

//After the @Option, must follow ("configuration")

public Collection<String> getAvailableFlows(@Option("configuration") Config config) {

final List<String> flows = Arrays.asList("default", "second_flow");

if (config.isABoolean()) {

flows.add("third_flow");

}

return flows;

}Defining multiple inputs

Having multiple inputs is similar to having multiple outputs, except that an OutputEmitter wrapper is not needed:

@ElementListener

public MyNewData map(@Input final MyData data, @Input("input2") final MyData2 data2) {

return createNewData(data1, data2);

}@Input takes the input name as parameter. If no name is set, it defaults to the "main (default)" input branch. It is recommended to use the default branch when possible and to avoid naming branches according to the component semantic.

Implementing batch processing

What is batch processing

Batch processing refers to the way execution environments process batches of data handled by a component using a grouping mechanism.

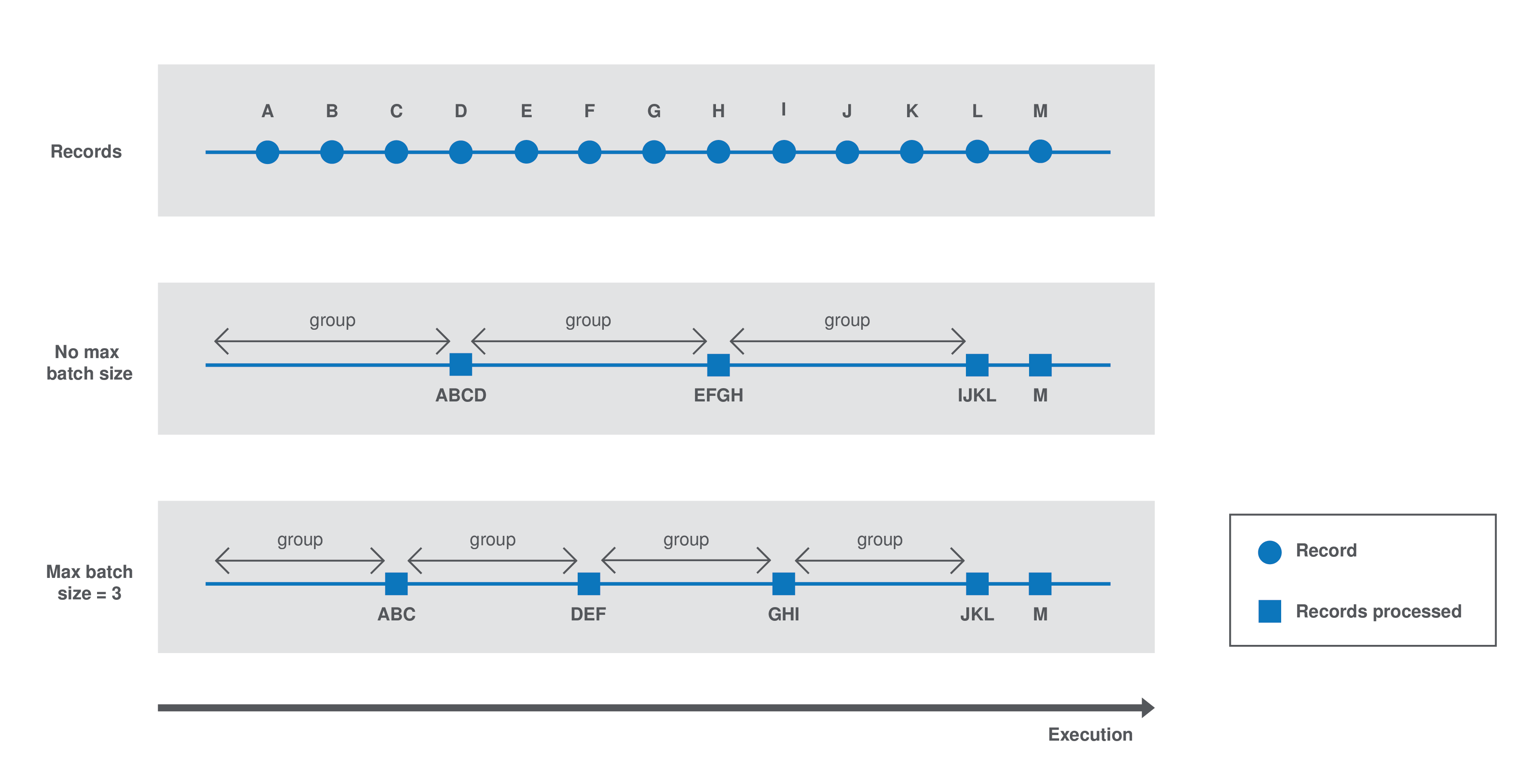

By default, the execution environment of a component automatically decides how to process groups of records and estimates an optimal group size depending on the system capacity. With this default behavior, the size of each group could sometimes be optimized for the system to handle the load more effectively or to match business requirements.

For example, real-time or near real-time processing needs often imply processing smaller batches of data, but more often. On the other hand, a one-time processing without business contraints is more effectively handled with a batch size based on the system capacity.

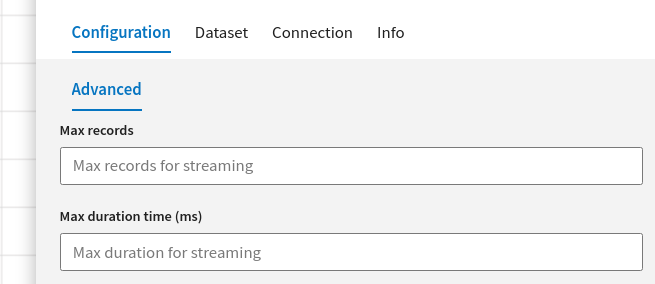

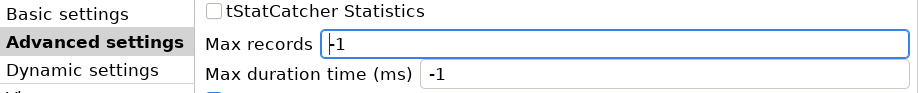

Final users of a component developed with the Talend Component Kit that integrates the batch processing logic described in this document can override this automatic size. To do that, a maxBatchSize option is available in the component settings and allows to set the maximum size of each group of data to process.

A component processes batch data as follows:

-

Case 1 - No

maxBatchSizeis specified in the component configuration. The execution environment estimates a group size of 4. Records are processed by groups of 4. -

Case 2 - The runtime estimates a group size of 4 but a

maxBatchSizeof 3 is specified in the component configuration. The system adapts the group size to 3. Records are processed by groups of 3.

Batch processing implementation logic

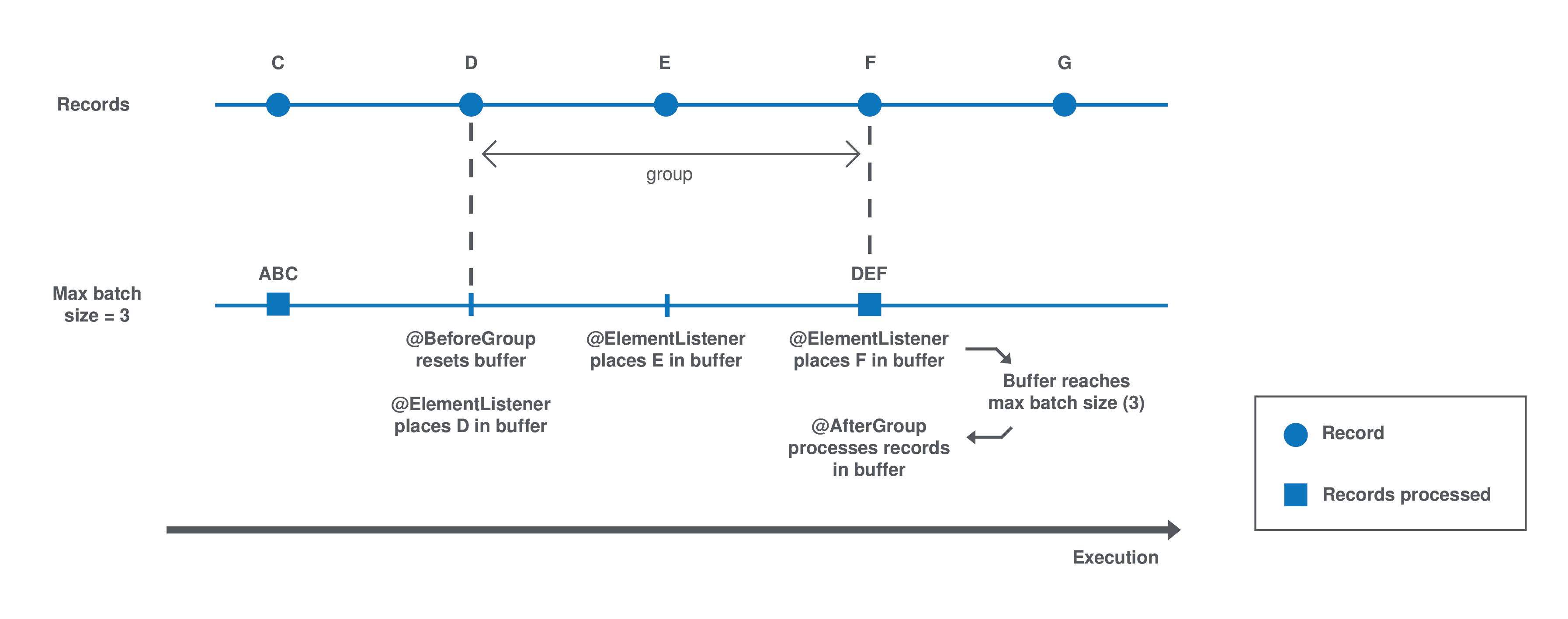

Batch processing relies on the sequence of three methods: @BeforeGroup, @ElementListener, @AfterGroup, that you can customize to your needs as a component Developer.

| The group size automatic estimation logic is automatically implemented when a component is deployed to a Talend application. |

Each group is processed as follows until there is no record left:

-

The

@BeforeGroupmethod resets a record buffer at the beginning of each group. -

The records of the group are assessed one by one and placed in the buffer as follows: The

@ElementListenermethod tests if the buffer size is greater or equal to the definedmaxBatchSize. If it is, the records are processed. If not, then the current record is buffered. -

The previous step happens for all records of the group. Then the

@AfterGroupmethod tests if the buffer is empty.

You can define the following logic in the processor configuration:

import java.io.Serializable;

import java.util.ArrayList;

import java.util.Collection;

import javax.json.JsonObject;

import org.talend.sdk.component.api.processor.AfterGroup;

import org.talend.sdk.component.api.processor.BeforeGroup;

import org.talend.sdk.component.api.processor.ElementListener;

import org.talend.sdk.component.api.processor.Processor;

@Processor(name = "BulkOutputDemo")

public class BulkProcessor implements Serializable {

private Collection<JsonObject> buffer;

@BeforeGroup

public void begin() {

buffer = new ArrayList<>();

}

@ElementListener

public void bufferize(final JsonObject object) {

buffer.add(object);

}

@AfterGroup

public void commit() {

// saves buffered records at once (bulk)

}

}You can also use the condensed syntax for this kind of processor:

@Processor(name = "BulkOutputDemo")

public class BulkProcessor implements Serializable {

@AfterGroup

public void commit(final Collection<Record> records) {

// saves records

}

}When using the @aftergroup feature, it can be helpful to determine if it’s the last call (last group). You can now annotate a Boolean with @lastgroup as a parameter of the method. This Boolean will be set to true for the final call, which can be useful for performing additional actions once all records have been processed.

For example, here is a scenario inspired by Snowflake:

Batches of records are staged within each @aftergroup call. Once all data has been staged, a final #commitBulkLoad call is executed. This action must be performed after all data has been staged. This process returns all rejected data, which is then transformed into TCK records and sent to the 'REJECT' flow.

@AfterGroup

public void afterGroup(@Output("REJECT") final OutputEmitter<Record> rejected, @LastGroup Boolean last) {

database.pushToStaging(records);

if(last){

List<Data> dataToReject = database.commitBulkLoad();

dataToReject.stream().map(d -> convertToRecord(d)).each(r -> rejected.emit(d));

}

}

When writing tests for components, you can force the maxBatchSize parameter value by setting it with the following syntax: <configuration prefix>.$maxBatchSize=10.

|

You can learn more about processors in this document.

Shortcut syntax for bulk output processors

For the case of output components (not emitting any data) using bulking you can pass the list of records to the @AfterGroup method:

@Processor(name = "DocOutput")

public class DocOutput implements Serializable {

@AfterGroup

public void onCommit(final Collection<Record> records) {

// save records

}

}Defining an output

What is an output

An Output is a Processor that does not return any data.

Conceptually, an output is a data listener. It matches the concept of processor. Being the last component of the execution chain or returning no data makes your processor an output component:

@ElementListener

public void store(final MyData data) {

// ...

}Defining a standalone component logic

Standalone components are the components without input or output flows. They are designed to do actions without reading or processing any data. For example standalone components can be used to create indexes in databases.

Before implementing the component logic and defining its layout and configurable fields, make sure you have specified its basic metadata, as detailed in this document.

Defining component layout and configuration

The component configuration is defined in the <component_name>Configuration.java file of the package. It consists in defining the configurable part of the component that will be displayed in the UI.

To do that, you can specify parameters. When you import the project in your IDE, the parameters that you have specified in the starter are already present.

| All input and output components must reference a dataset in their configuration. Refer to Defining datasets and datastores. |

Parameter name

Components are configured using their constructor parameters. All parameters can be marked with the @Option property, which lets you give a name to them.

For the name to be correct, you must follow these guidelines:

-

Use a valid Java name.

-

Do not include any

.character in it. -

Do not start the name with a

$. -

Defining a name is optional. If you don’t set a specific name, it defaults to the bytecode name. This can require you to compile with a

-parameterflag to avoid ending up with names such as arg0, arg1, and so on.

Examples of option name:

| Option name | Valid |

|---|---|

myName |

|

my_name |

|

my.name |

|

$myName |

Parameter types

Parameter types can be primitives or complex objects with fields decorated with @Option exactly like method parameters.

| It is recommended to use simple models which can be serialized in order to ease serialized component implementations. |

For example:

class FileFormat implements Serializable {

@Option("type")

private FileType type = FileType.CSV;

@Option("max-records")

private int maxRecords = 1024;

}

@PartitionMapper(name = "file-reader")

public MyFileReader(@Option("file-path") final File file,

@Option("file-format") final FileFormat format) {

// ...

}Using this kind of API makes the configuration extensible and component-oriented, which allows you to define all you need.

The instantiation of the parameters is done from the properties passed to the component.

Primitives

A primitive is a class which can be directly converted from a String to the expected type.

It includes all Java primitives, like the String type itself, but also all types with a org.apache.xbean.propertyeditor.Converter:

-

BigDecimal -

BigInteger -

File -

InetAddress -

ObjectName -

URI -

URL -

Pattern -

LocalDateTime -

ZonedDateTime

Mapping complex objects

The conversion from property to object uses the Dot notation.

For example, assuming the method parameter was configured with @Option("file"):

file.path = /home/user/input.csv

file.format = CSVmatches

public class FileOptions {

@Option("path")

private File path;

@Option("format")

private Format format;

}List case

Lists rely on an indexed syntax to define their elements.

For example, assuming that the list parameter is named files and that the elements are of the FileOptions type, you can define a list of two elements as follows:

files[0].path = /home/user/input1.csv

files[0].format = CSV

files[1].path = /home/user/input2.xml

files[1].format = EXCEL

if you desire to override a config to truncate an array, use the index length, for example to truncate previous example to only CSV, you can set:

|

files[length] = 1Map case

Similarly to the list case, the map uses .key[index] and .value[index] to represent its keys and values:

// Map<String, FileOptions>

files.key[0] = first-file

files.value[0].path = /home/user/input1.csv

files.value[0].type = CSV

files.key[1] = second-file

files.value[1].path = /home/user/input2.xml

files.value[1].type = EXCEL// Map<FileOptions, String>

files.key[0].path = /home/user/input1.csv

files.key[0].type = CSV

files.value[0] = first-file

files.key[1].path = /home/user/input2.xml

files.key[1].type = EXCEL

files.value[1] = second-file| Avoid using the Map type. Instead, prefer configuring your component with an object if this is possible. |

Defining Constraints and validations on the configuration

You can use metadata to specify that a field is required or has a minimum size, and so on. This is done using the validation metadata in the org.talend.sdk.component.api.configuration.constraint package:

MaxLength

Ensure the decorated option size is validated with a higher bound.

-

API:

@org.talend.sdk.component.api.configuration.constraint.Max -

Name:

maxLength -

Parameter Type:

double -

Supported Types: —

java.lang.CharSequence -

Sample:

{

"validation::maxLength":"12.34"

}MinLength

Ensure the decorated option size is validated with a lower bound.

-

API:

@org.talend.sdk.component.api.configuration.constraint.Min -

Name:

minLength -

Parameter Type:

double -

Supported Types: —

java.lang.CharSequence -

Sample:

{

"validation::minLength":"12.34"

}Pattern

Validate the decorated string with a javascript pattern (even into the Studio).

-

API:

@org.talend.sdk.component.api.configuration.constraint.Pattern -

Name:

pattern -

Parameter Type:

java.lang.string -

Supported Types: —

java.lang.CharSequence -

Sample:

{

"validation::pattern":"test"

}Max

Ensure the decorated option size is validated with a higher bound.

-

API:

@org.talend.sdk.component.api.configuration.constraint.Max -

Name:

max -

Parameter Type:

double -

Supported Types: —

java.lang.Number—int—short—byte—long—double—float -

Sample:

{

"validation::max":"12.34"

}Min

Ensure the decorated option size is validated with a lower bound.

-

API:

@org.talend.sdk.component.api.configuration.constraint.Min -

Name:

min -

Parameter Type:

double -

Supported Types: —

java.lang.Number—int—short—byte—long—double—float -

Sample:

{

"validation::min":"12.34"

}Required

Mark the field as being mandatory.

-

API:

@org.talend.sdk.component.api.configuration.constraint.Required -

Name:

required -

Parameter Type:

- -

Supported Types: —

java.lang.Object -

Sample:

{

"validation::required":"true"

}MaxItems

Ensure the decorated option size is validated with a higher bound.

-

API:

@org.talend.sdk.component.api.configuration.constraint.Max -

Name:

maxItems -

Parameter Type:

double -

Supported Types: —

java.util.Collection -

Sample:

{

"validation::maxItems":"12.34"

}MinItems

Ensure the decorated option size is validated with a lower bound.

-

API:

@org.talend.sdk.component.api.configuration.constraint.Min -

Name:

minItems -

Parameter Type:

double -

Supported Types: —

java.util.Collection -

Sample:

{

"validation::minItems":"12.34"

}UniqueItems

Ensure the elements of the collection must be distinct (kind of set).

-

API:

@org.talend.sdk.component.api.configuration.constraint.Uniques -

Name:

uniqueItems -

Parameter Type:

- -

Supported Types: —

java.util.Collection -

Sample:

{

"validation::uniqueItems":"true"

}

When using the programmatic API, metadata is prefixed by tcomp::. This prefix is stripped in the web for convenience, and the table above uses the web keys.

|

Also note that these validations are executed before the runtime is started (when loading the component instance) and that the execution will fail if they don’t pass.

If it breaks your application, you can disable that validation on the JVM by setting the system property talend.component.configuration.validation.skip to true.

Defining datasets and datastores

Datasets and datastores are configuration types that define how and where to pull the data from. They are used at design time to create shared configurations that can be stored and used at runtime.

All connectors (input and output components) created using Talend Component Kit must reference a valid dataset. Each dataset must reference a datastore.

-

Datastore: The data you need to connect to the backend.

-

Dataset: A datastore coupled with the data you need to execute an action.

|

Make sure that:

These rules are enforced by the |

Defining a datastore

A datastore defines the information required to connect to a data source. For example, it can be made of:

-

a URL

-

a username

-

a password.

You can specify a datastore and its context of use (in which dataset, etc.) from the Component Kit Starter.